In the first part of this series, I identified four design goals for a learning platform that supports conversation-based courses. In the second part, I brought up a use case of a kind of faculty professional development course that works as a distributed flip, based on our forthcoming e-Literate TV series on personalized learning. In the next two posts, I’m going to go into some aspects of the system design. But before I do that, I want to address a concern that some readers have raised. Pointing to my apparently infamous “Dammit, the LMS” post, they raise the question of whether I am guilty of a certain amount of techno-utopianism. Whether I’m assuming just building a new widget will solve a difficult social problem. And whether any system, even if it starts out relatively pure, will inevitably become just another LMS as the same social forces come into play.

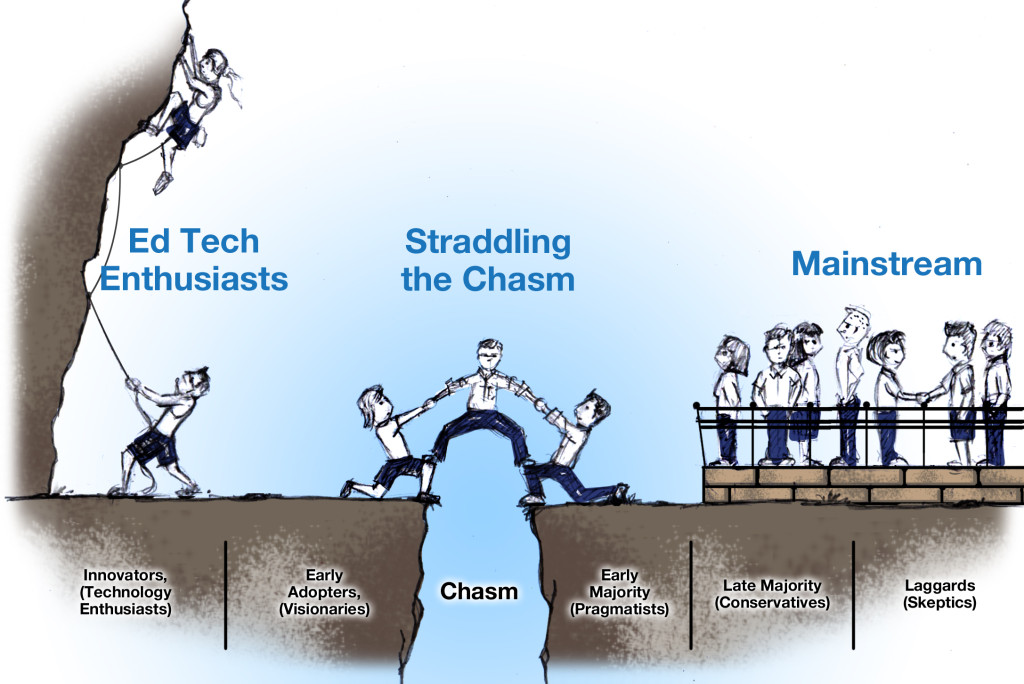

I hope not. The core lesson of “Dammit, the LMS” is that platform innovations will not propagate unless the pedagogical changes that take advantage of those innovations also propagate, and pedagogical changes will not propagate without changes in the institutional culture in which they are embedded. Given that context, the use case I proposed in part 2 of this series is every bit as important as the design goals in part 1 because it provides a mechanism by which we may influence the culture. This actually aligns well with the “use scale appropriately” design goal from part 1, which included this bit:

Right now, there is a lot of value to the individual teacher of being able to close the classroom door and work unobserved by others. I would like to both lower barriers to sharing and increase the incentives to do so. The right platform can help with that, although it’s very tricky. Learning Object Repositories, for example, have largely failed to be game changers in this regard, except within a handful of programs or schools that have made major efforts to drive adoption. One problem with repositories is that they demand work on the part of the faculty while providing little in the way of rewards for sharing. If we are going to overcome the cultural inhibitions around sharing, then we have to make the barrier as low as possible and the reward as high as possible.

When we get to part 4 of the series, I hope to show how the platform, pedagogy, and culture might co-evolve through a combination of curriculum design, learning design, and platform design, all prepared for faculty as participants in a low-stakes environment. But before we get there, I first have to put some building blocks in place related to fostering and assessing educational conversation. That’s what I’m going to try to do in this post.

You may recall from part 1 of this series that trust, or reputation, has been the main proxy for expertise throughout most of human history. Credentials are a relatively new invention designed to solve the problem that person-to-person trust networks start to break down when population sizes get beyond a certain point. The question I raised was whether modern social networking platforms, combined with analytics, can revive something like the original trust network. LinkedIn is one example of such an effort. We want an approach that will enable us to identify expertise through trust networks based on expertise-relevant conversations of the type that might come up in a well facilitated discussion-based class.

It turns out that there is quite a bit of prior art in this area. Discussion board developers have been interested in ways to identify experts in the conversation ever since internet-based discussions grew large enough that people needed help figuring out who to pay attention to and who to ignore (and who to actively filter out). Keeping the signal-to-noise ratio high was a design goal, for example, in the early versions of the software developed to manage the Slashdot community in the late 1990s. (I suspect some of you have even earlier examples.) Since that design goal amounts to identifying community-recognized expertise and value in large-scale but authentic conversations (authentic in the sense that people are not participating because they were told to participate), it makes sense to draw on that accumulated experience in thinking through our design challenges. For our purposes, I’m going to look at Discourse, an open source discussion forum that was designed by some of the people who worked on the online community Stack Overflow.

Discourse has a number of features for scaling conversations that I won’t get into here, but their participant trust model is directly relevant. They base their model on one described by Amy Jo Kim in her book Community Building on the Web:

The progression, visitor > novice > regular > leader > elder, provides a good first approximation of levels for an expertise model. (The developers of Discourse change the names of the levels for their own purposes, but I’ll stick with the original labels here.) Achieving a higher level in Discourse unlocks certain privileges. For example, only leaders or elders can recategorize or rename discussion threads. This is mostly utilitarian, but it has an element of gamification to it. Your trust level is a badge certifying your achievements in the discussion community.

The model that Discourse currently uses for determining participant trust levels is pretty simple. For example, in order to get to the middle trust level, a participant must do the following:

- visiting at least 15 days, not sequentially

- casting at least 1 like

- receiving at least 1 like

- replying to at least 3 different topics

- entering at least 20 topics

- reading at least 100 posts

- spending a total of 60 minutes reading posts

This is not terribly far from a very basic class participation grade. It is grade-like in the sense that it is a five-point evaluative scale, but it is simple, like the most basic of participation grades, in the sense that it mostly looks at quantity rather than quality of participation. The first hint of a difference is “receiving at least 1 like.” A “like” is essentially a micro-scale peer grade.
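To make the quantity-based nature of that check concrete, here is a minimal sketch of the middle-level test in Python. The thresholds come from the list above; the class, the field names, and the function names are all hypothetical and are not taken from Discourse's actual code.

```python
from dataclasses import dataclass


@dataclass
class ParticipantActivity:
    """Raw activity counts for one participant (hypothetical field names)."""
    days_visited: int
    likes_given: int
    likes_received: int
    topics_replied_to: int
    topics_entered: int
    posts_read: int
    minutes_reading: int


# Minimums copied from the list above.
MIDDLE_LEVEL_THRESHOLDS = {
    "days_visited": 15,
    "likes_given": 1,
    "likes_received": 1,
    "topics_replied_to": 3,
    "topics_entered": 20,
    "posts_read": 100,
    "minutes_reading": 60,
}


def unmet_requirements(activity):
    """Return the names of the thresholds the participant has not reached yet."""
    return [
        name
        for name, minimum in MIDDLE_LEVEL_THRESHOLDS.items()
        if getattr(activity, name) < minimum
    ]


def qualifies_for_middle_level(activity):
    """True when every threshold is met."""
    return not unmet_requirements(activity)
```

Note that six of the seven checks count what the participant did. Only `likes_received` depends on what anyone else thought of it.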

We could also imagine other, more sophisticated metrics that directly assess the degree to which a participant is considered to be a trusted community member. Here are a few examples:

- The number of replies or quotes that a participant’s comments generate

- The number of mentions the participant generates (in the @twitterhandle sense)

- The number of either of the above from participants who have earned high trust levels

- The number of “likes” the participant gets for posts in which they mention or quote another post

- The breadth of the network of people with whom the participant converses

- Discourse analysis of the language used in the participant’s post to see if they are being helpful or if they are asking clear questions (for example)

Some of these metrics use the trust network to evaluate expertise, e.g., “many participants think you said something smart here” or “trusted participants think you said something smart here.” But some directly measure actual competencies, e.g., the ability to find pre-existing information and quote it. You can combine these into a metric of the ability to find pre-existing relevant information and quote it appropriately by looking at posts that contain quotes and were liked by a number of participants or by trusted participants.
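As a rough illustration of that combined metric, the sketch below counts a participant's quoting posts that were liked either by several people or by at least one trusted person. The post structure (`author`, `has_quote`, `liked_by`), the `min_likes` cutoff, and the function name are assumptions made up for the example.

```python
def quoting_score(posts, participant, trusted, min_likes=3):
    """Count the participant's quoting posts that the community endorsed.

    posts: iterable of dicts with "author", "has_quote" (bool), "liked_by" (set)
    trusted: set of participants who have earned a high trust level
    """
    score = 0
    for post in posts:
        if post["author"] != participant or not post["has_quote"]:
            continue
        # Ignore self-likes so a participant cannot endorse their own post.
        likers = post["liked_by"] - {participant}
        if len(likers) >= min_likes or likers & trusted:
            score += 1
    return score
```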

Think about these metrics as the basis for a grading system. Does the teacher want to reward students who show good teamwork and mentoring skills? Then she might increase the value of metrics like “post rated helpful by a participant with less trust” or “posts rated helpful by many participants.” If she wants to prioritize information finding skills, then she might increase the weight of appropriate quoting of relevant information. Note that, given a sufficiently rich conversation with a sufficiently rich set of metrics, there will be more than one way to climb the five-point scale. We are not measuring fine-grained knowledge competencies. Rather, we are holistically assessing the student’s capacity to be a valuable and contributing member of a knowledge-building community. There should be more than one way to get high marks at that. And again, these are high-order competencies that most employers value highly. They are just not broken down into itsy bitsy pass-or-fail knowledge chunks.
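Here is one way the weighting idea might look in code, as a sketch rather than a proposal for the actual formula. It assumes each metric has already been normalized to a value between 0 and 1; the metric names, the weights, and the even split of the score range into five levels are all hypothetical choices.

```python
LEVELS = ("visitor", "novice", "regular", "leader", "elder")


def weighted_score(metrics, weights):
    """Weighted average of normalized metrics (each assumed to lie in 0..1)."""
    total_weight = sum(weights.values())
    if total_weight == 0:
        return 0.0
    weighted_sum = sum(w * metrics.get(name, 0.0) for name, w in weights.items())
    return weighted_sum / total_weight


def trust_level(score):
    """Map a 0..1 score onto the five-point scale, splitting the range evenly."""
    index = int(score * len(LEVELS))
    index = max(0, min(index, len(LEVELS) - 1))  # clamp out-of-range scores
    return LEVELS[index]


# Two hypothetical weightings a teacher might choose between.
MENTORING_WEIGHTS = {"helpful_to_lower_trust": 3, "helpful_to_many": 2, "quotes_liked": 1}
RESEARCH_WEIGHTS = {"helpful_to_lower_trust": 1, "helpful_to_many": 1, "quotes_liked": 4}
```

Under the mentoring weights, a student who scores high on the two "helpful" metrics and low on quoting lands on a higher level than a student with the opposite profile, and the ranking flips under the research weights. That is the "more than one way to climb the scale" property in miniature.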

Unfortunately, Discourse doesn’t have this rich array of metrics or options for combining them. So one of the first things we would want to do in order to adapt it for our use case is abstract Discourse’s trust model, as well as all the possible inputs, using IMS Caliper (or something based on the current draft of it, anyway). There are a few reasons for this. First, we’d want to be able to add inputs as we think of them. For example, we might want to include how many people start using a tag that a participant has introduced. You don’t want to have to hard code every new parameter and every new way of weighing the parameters against each other. Second, we’re eventually going to want to add other forms of input from other platforms (e.g., blog posts) that contribute to a participant’s expertise rating. So we need the ratings code in a form that is designed for extension. We need APIs. And finally, we’d want to design the system so that any vendor, open source, or home-grown analytics system could be plugged in to develop the expertise ratings based on the inputs.
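To show what "designed for extension" could mean in practice, here is a small sketch of a metric registry. New inputs are added by registering a function rather than by editing the ratings code. The event dictionaries are a made-up, simplified format for the example; they are not the IMS Caliper schema, and every name here is hypothetical.

```python
METRIC_REGISTRY = {}


def metric(name):
    """Decorator that registers a function as a named input to the trust model."""
    def register(func):
        METRIC_REGISTRY[name] = func
        return func
    return register


@metric("likes_received")
def likes_received(events, participant):
    """Count 'liked' events whose target post was written by the participant."""
    return sum(
        1 for e in events
        if e["action"] == "liked" and e.get("target_author") == participant
    )


@metric("tag_adoption")
def tag_adoption(events, participant):
    """Count (tag, person) pairs where someone else reused a tag the participant used first.

    Assumes events are in chronological order.
    """
    first_user = {}
    adopters = set()
    for e in events:
        if e["action"] != "tagged":
            continue
        tag = e["tag"]
        if tag not in first_user:
            first_user[tag] = e["actor"]
        elif first_user[tag] == participant and e["actor"] != participant:
            adopters.add((tag, e["actor"]))
    return len(adopters)


def compute_inputs(events, participant):
    """Run every registered metric and return the results keyed by metric name."""
    return {name: func(events, participant) for name, func in METRIC_REGISTRY.items()}
```

An analytics system plugged in downstream would only need the dictionary returned by `compute_inputs`, so it would not have to know how any individual input was calculated or which platform the events came from.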

Discourse also has integration with WordPress, which is interesting not so much because of WordPress itself but because the nature of the integration points toward more functionality that we can use, particularly for analytics purposes. First, the Discourse WordPress plugin can automatically spawn a discussion thread in Discourse for every new post in WordPress. This is interesting because it gives us a semantic connection between a discussion and a piece of (curricular) material. We automatically know what the discussion is “about.” It’s hard to get participants in a discussion to do a lot of tagging of their posts. But it’s a lot easier to get curricular materials tagged. If we know that a discussion is about a particular piece of content and we know details about the subjects or competencies that the content is about (and whether that content contains an explanation to be understood, a problem to be solved, or something else), then we can make some relatively good inferences about what it says about a person’s expertise when she makes several highly rated comments in discussions about content items that share the same competency or topic tag. Second, Discourse has the ability to publish the comments on the content back to the post. This is a capability that we’re going to file away for use in the next part of this series.
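The inference described above can be sketched roughly as follows: look up the tags on the content item each discussion is attached to, then flag the tags under which a participant has made several highly rated comments. The data shapes, the `min_likes` and `min_comments` cutoffs, and the function name are assumptions for illustration only.

```python
from collections import defaultdict


def expertise_signals(comments, content_tags, min_likes=3, min_comments=2):
    """Return {author: [tags]} for tags where the author has repeated well-liked comments.

    comments: iterable of dicts with "author", "content_id", "likes"
    content_tags: dict mapping a content item's id to its competency/topic tags
    """
    counts = defaultdict(lambda: defaultdict(int))
    for comment in comments:
        if comment["likes"] < min_likes:
            continue  # only highly rated comments count as evidence
        for tag in content_tags.get(comment["content_id"], ()):
            counts[comment["author"]][tag] += 1

    signals = {}
    for author, tag_counts in counts.items():
        strong = sorted(tag for tag, n in tag_counts.items() if n >= min_comments)
        if strong:
            signals[author] = strong
    return signals
```

The participants never tag anything themselves here. All of the topical information comes from the tags on the curricular material.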

If we were to abstract the ratings system from Discourse, add an API that lets it take different variables (starting with various metadata about users and posts within Discourse), and add a pluggable analytics dashboard that lets teachers and other participants experiment with different types of filters, we would have a reasonably rich environment for a cMOOC. It would support large-scale conversations that could be linked to specific pieces of curricular content (or not). It would help people find more helpful comments and more helpful commenters. It could begin to provide some fairly rich community-powered but analytics-enriched evaluations of both of these. And, in our particular use case, since we would be talking about analytics-enriched personalized learning products and strategies, having some sort of pluggable analytics that are not hidden in a black box could give participants more hands-on experience with how analytics can work in a class situation, what they do well, what they don’t do well, and how you should manage them as a teacher. There are some additional changes we’d need to make in order to bring the system up to snuff for traditional certification courses, but I’ll save those details for part 5.
