Despite much talk about the demise of the LMS market, the end is nowhere in sight. Unlike many of the newer learning platform concepts (e.g. MOOCs, free platforms, unbundled learning platforms), the LMS market has an established business model and real revenues. Just today came news of an investment analysis report predicting that the total LMS market (higher ed, corporate training, K-12) would triple in revenue by 2018, moving from $2.6B to $7.8B. The LMS ain’t sexy, but it’s still important.

Edutechnica

George Kroner, a former engineer at Blackboard who now works for University of Maryland University College (UMUC), has developed what may be the most thorough measurement of LMS adoption in higher education at Edutechnica (OK, he’s better at coding and analysis than site naming). This side project (not affiliated with UMUC) started two months ago based on George’s ambition to unite various learning communities with better data. He said that he was inspired by the Campus Computing Project (CCP) and that Edutechnica should be seen as complementary to the CCP.

The project is based on a web crawler that checks against national databases as a starting point to identify the higher education institution, then goes out to the official school web site to find the official LMS (or multiple LMSs officially used). The initial data is all based on the Anglosphere (US, UK, Canada, Australia), but there is no reason this data could not expand.
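As a rough illustration of the detection step described above, here is a minimal sketch of how links found on a school's official site could be matched against LMS "fingerprints". This is not George's actual crawler: the `FINGERPRINTS` table, the `detect_lms` function, and the example links are all hypothetical, and a real crawler would need far more robust matching plus the manual cleanup discussed later in this post.

```python
from urllib.parse import urlparse

# Hypothetical fingerprints (assumptions for illustration only): substrings
# of a link's host or path that hint at a particular LMS.
FINGERPRINTS = {
    "Blackboard": ("blackboard",),
    "Moodle": ("moodle",),
    "Canvas": ("instructure",),
    "Desire2Learn": ("desire2learn", "d2l"),
    "Sakai": ("sakai",),
}


def detect_lms(links):
    """Return a sorted list of LMS names hinted at by the given links."""
    found = set()
    for link in links:
        parsed = urlparse(link.lower())
        # Only look at host and path; query strings are ignored in this sketch.
        haystack = parsed.netloc + parsed.path
        for lms, needles in FINGERPRINTS.items():
            if any(needle in haystack for needle in needles):
                found.add(lms)
    return sorted(found)


# Made-up links, as if scraped from one institution's web site.
example_links = [
    "https://www.example.edu/admissions",
    "https://example.instructure.com/login",
    "https://moodle.example.edu/course/view.php?id=1",
]
print(detect_lms(example_links))
```

A school that turns up more than one LMS this way would still need a human (or a smarter script) to decide which systems are official and which are pilots.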

Here’s what is interesting about this data-driven approach:

- The data already goes beyond just the US market, which has been a real challenge for market analysis.

- The coverage is for the vast majority of institutions rather than a sample. For the US, it covers all institutions with enrollment above 2,000, but for the others (UK, Canada, Australia) it is complete coverage of all institutions identified in official databases.

- Given the tie-in to official databases, the data can go beyond count of institutions and be scaled by enrollment.

- The data already includes additional information such as version number and whether the LMS is self-hosted or not.

- Due to the light touch, the data can be collected more frequently (several times per year). Eventually this will allow for longitudinal data on LMS migrations.
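To make the "scaled by enrollment" point concrete, here is a small sketch comparing market share by institution count with market share weighted by enrollment. The records and the `market_share` helper are made up for illustration; they are not Edutechnica data or code.

```python
from collections import defaultdict

# Made-up records for illustration only: (institution, official LMS, enrollment).
records = [
    ("College A", "Blackboard", 30000),
    ("College B", "Moodle", 5000),
    ("College C", "Moodle", 5000),
    ("College D", "Canvas", 10000),
]


def market_share(records, weight_by_enrollment=False):
    """Return each LMS's share, by institution count or by enrollment."""
    totals = defaultdict(float)
    for _name, lms, enrollment in records:
        # Each institution counts once, or counts as its enrollment.
        totals[lms] += enrollment if weight_by_enrollment else 1
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {lms: value / grand_total for lms, value in totals.items()}


by_count = market_share(records)
by_enrollment = market_share(records, weight_by_enrollment=True)
print(by_count)
print(by_enrollment)
```

In this toy data Moodle leads by institution count (2 of 4 schools), while Blackboard leads by enrollment (30,000 of 50,000 students), which is why having both views matters.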

Of course no data set of this type can be 100% clean, and the Edutechnica site relies on partially manual cleanup to remove pilot programs and small-scale usage of LMSs. The script counts an LMS if it is the official system at the college level or above. If an LMS is used by just a few faculty or a single program, it is removed. George has indicated that the script is being updated over time, based on the results of the manual process, so that more of the data cleaning happens automatically.

The plan is to keep the site updated and free, with no intention either to share the institutional data or to charge for it. The reason for not sharing the full data set is to avoid a direct-marketing campaign by vendors.

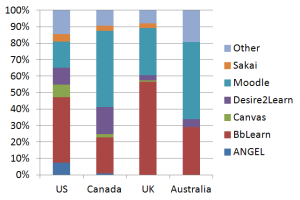

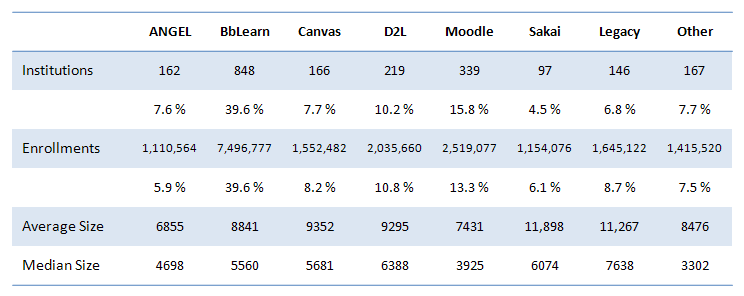

What are some of the results? The first blog post shared the following LMS adoption numbers:

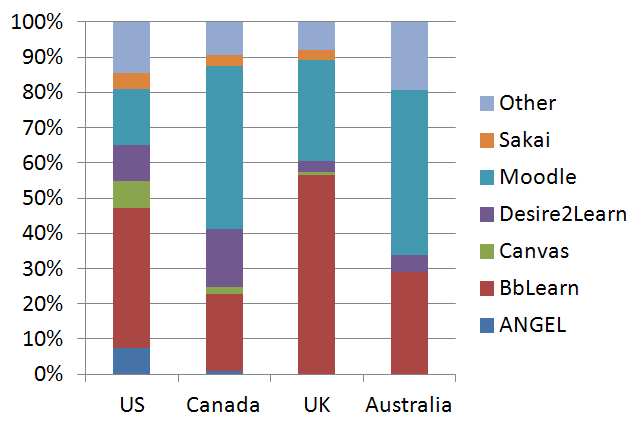

Given the data coverage, there are also interesting comparisons by country:

There is also some interesting data on product versions used and hosting status that is worth checking out.

LISTedTECH

LISTedTECH was created by Justin Menard, who is Business Intelligence Senior Analyst at the University of Ottawa. I was not able to interview Justin, but the about page describes the site and data. First of all, the site is far broader in scope than just the LMS – and there are a ton of useful visualizations based on his dataset. It seems that most data is presented in Tableau for interactive visualizations. For this post I’ll just share some LMS data as images, but head on over for more. It seems like the first data was presented in 2012, but I could be wrong here (Justin, waiting for you in the comments).

Version 1.0

In its first version it was a simple blog with lists and graphs. I had used data and research that I and co-workers had accumulated at work. The site was up about two months before I basically got a cease and desist. I had used part of a legal text from another website and hadn’t taken out their name (Oupsi). They then accused me of copying their data. No chance in hell. After coming back from vacation (this happened when I was at Disney World during our xmas vacation), I met with a colleague (who is also a lawyer) at work and asked advice. She told me that since I was just doing this for fun (and they had money and lawyers), it would be simple to just shut it down. So I did.

Version 1.1

After licking my wounds (more like pride), I started discussing this with a friend and colleague. I came to the conclusion that I could not let this go (again pride or stubbornness). I started working on the data and adding links to the data I had in the DB. My goal was to redo the site but with proof of where the data could be found. It also made me realise how much data was out there and how much work this would be if I was to do it right.

Version 1.2…alpha

Since money is not an issue (I’m helping a wealthy Nigerian family move a very large sum of money out of the country) I opted for open source systems and modules. The version that is live is a simple Drupal 7 installation. My goal is a simple one: see if it’s worth pursuing this project.

The site is now in beta with version 1.3.

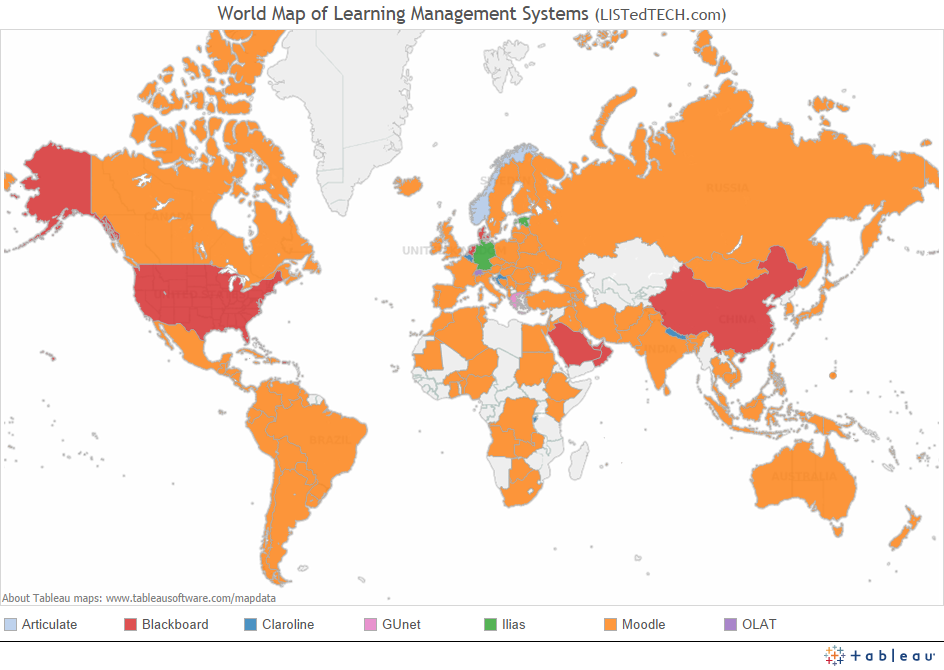

While Justin does not attempt to get 100% coverage, there are over 5,600 institutions in his database. In one post he mapped out the market-leading LMS per country:

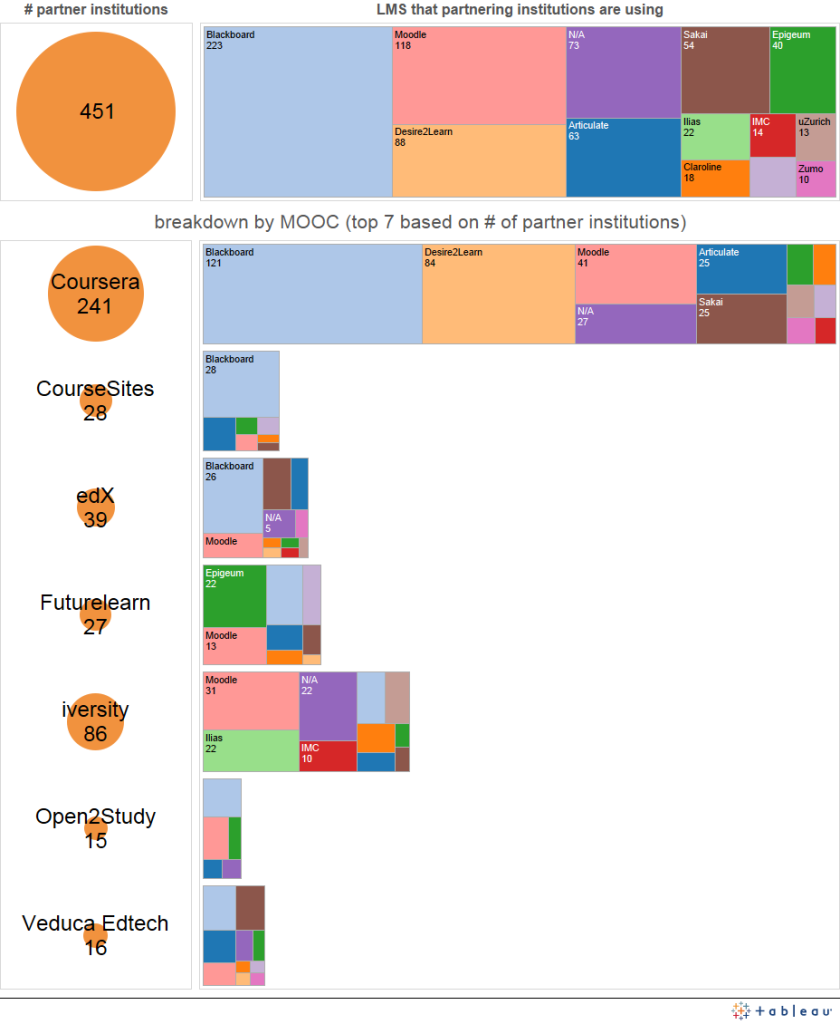

In another post, he mapped the 451 partner institutions from the major MOOC vendors to determine the identity of their official LMS:

Update: This is not to say that the MOOC runs on the selected LMS as a platform, but that the rest of the school (outside of MOOC usage) runs a particular LMS.

Kudos

Both of these sites are great, relatively new sources of information on the LMS market.

Update: I should point out that no one to my knowledge has independently verified the accuracy of the data in these sites. In order to gain longer-term acceptance of these data sets, we will need some method to provide some level of verification.

Great resources – thanks, Phil!

A couple of problems with the EduTechnica data. The vast majority of liberal arts colleges in the US have fewer than 2,000 FTEs, so they are excluded entirely from his analysis. Also, it is hard to believe his institutional total actually represents 100% of educational institutions in the represented countries (even setting aside the previous remark).