I wrote a series of posts last fall about security testing for higher ed LMS products. In my initial post I called for more transparency.

We need more transparency in the LMS market, and clients should have access to objective measurements of the security of a solution. To paraphrase Michael Feldstein’s suggestions from a 2009 post:

- There is no guarantee that any LMS is more secure just because they say they are more secure

- Customers should ask for, and LMS vendors should supply, detailed information on how the vendor or open source community has handled security issues in practice

- LMS providers should make public a summary of vulnerabilities, including resolution time

I would add to this call for transparency that LMS vendors and open source communities should share information from their third-party security audits and tests. All of the vendors that I talked to have some form of third-party penetration testing and security audits; however, how does this help the customer unless this information is transparent and available? Of course this transparency should not include details that would advertise vulnerabilities to hackers, but there should be some manner to be open and transparent on what the audits are saying.

Subsequently I was asked by Instructure to serve as an embedded reporter as they undertook a public security audit. In a post from January 2012, Josh Coates called for other LMS vendors to follow suit.

Despite the lack of response, Instructure declared their intent to test again in fall 2012.

We will kick off our second annual open security audit in Q4. We invite any and all education companies to participate. We think education should be open, safe, and secure — and that corporations should be held accountable for their claims.

Second Annual Audit for Canvas LMS

True to their word, Instructure conducted another audit this year, again using Securus Global, described in this blog post and documented in this Securus report. I did not act as an embedded reporter this time around, but I would like to highlight some of the findings from the report.

Overall, the security posture of the environment is reasonable, with several high and medium vulnerabilities being identified in the application during testing. However, as a result of reporting of issues promptly and regular status updates, all high risk issues were resolved with a number of moderate and low risk vulnerabilities also being resolved.

The Canvas platform has largely been developed with security in mind, as most forms and user supplied input are heavily validated and restricted. The platform was developed on top of the Ruby on Rails MVC framework which provides a strong security baseline. There are, however, some improvements that could be made to the code base that could reduce the likelihood of introducing new vulnerabilities in the future.

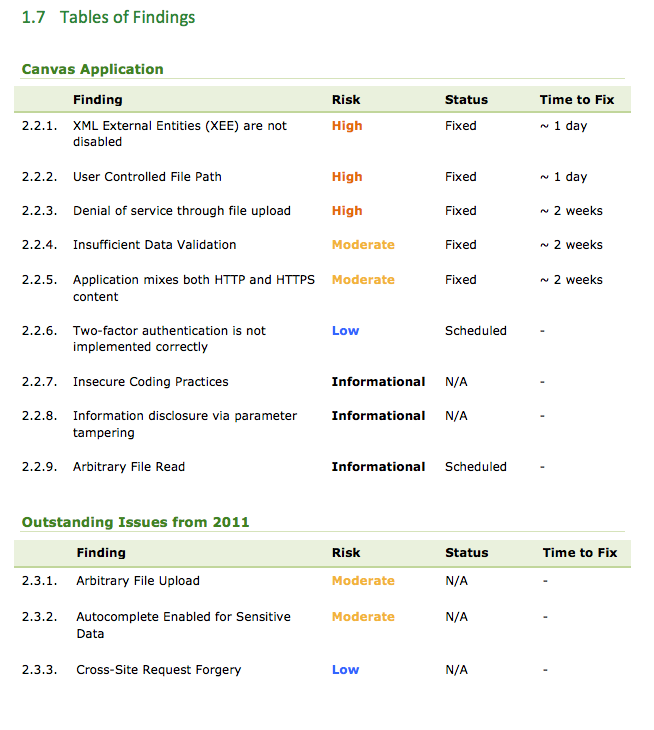

As expected, Securus did find a number of security vulnerabilities. Each vulnerability is rated by Securus in decreasing order of perceived risk as Critical, High, Moderate, or Low. This risk is based on an assessment of the likelihood and consequence of particular vulnerabilities being exploited. Securus Global states that they follow the International Standards ISO 31000 and ISO 31010 for risk identification, classification and assessment.

During this year’s testing, there appear to be 6 issues identified, with 0 Critical, 3 High, 2 Moderate, and 1 Low. Securus also identified 3 Informational items for Instructure to consider in the future. Like last year, Securus verified the remediation of most of the issues. This last point is important for two reasons:

- By not reporting until the critical and high risk items have been remediated, the public testing removes most of the risk of publicizing security audit results; and

- There is now an independent source to verify the fixes.

Transparent Process

I’ll let the results of the Canvas testing speak for themselves, but I would like to further comment on why this process is good for the ed tech community based on a real case.

In September, the Utah Education Network had a security incident in which students had permission to view and change grades, as described in the Utah Statesman.

A set of temporary software glitches allowed students across the state to access teacher gradebooks on Canvas for almost two hours on Sept. 11.

The errors came as a result of a scheduled software updates at 12:30 a.m. and 11:30 a.m. and lasted a total of 105 minutes, said Devin Knighton, public relations director for the Utah-based company and Canvas creator Instructure. Any student who accessed Canvas in the hour before the update and re-logged on immediately after was able to view and edit the gradebook for their classes.

All changes made were fixed within the day, Knighton said. Because Canvas is not where permanent grades are kept, the Instructure staff was able to access a log and let school officials know exactly what changes were made. [snip]

At USU, 78 students out of the 5,521 active users that day temporarily had access to the modified permissions and only three made changes to grades. Of the students who modified grades, two actually gave themselves lower scores, prompting officials to suspect the changes were experimental, said USU spokesman Tim Vitale.

Devin Knighton from Instructure addressed the issue of preventing future problems.

Utah Education Network has been using the program for two years now, but this problem was a first, Knighton said. He said extra security, such as checks on coding and processes, are being put in place to prevent other major errors but that there’s no foolproof way to prevent errors.

“We can’t promise it will never happen again, because it’s software,” he said.

While Instructure quickly addressed the specific incident identified by Utah, how do we know if they fixed the underlying problem or just provided a patch to fix the immediate symptom? Without the audit, we would have had to take Instructure’s word for it. With the audit, we can rely on an independent expert, Securus Global, to pass judgement based on penetration testing, so we should have much higher confidence that the underlying problem was truly fixed.

There are arguments for and against this public form of security audits, which I covered in my analysis post last year. The key issue for me is that the process is transparent. Security issues are not hidden or glossed over with this approach, and the whole ed tech community could benefit from this form of openness. Let’s hope that other LMS vendors consider doing the same. If their judgement is that public testing is not the right approach, then they could state their position in a blog post.
