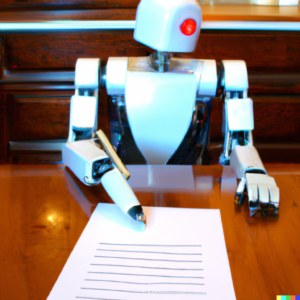

The real threat of students cheating with programs like ChatGPT is not that they’ll get away with it. Rather, the threat is that, in getting away with it, they prove that they are training themselves for jobs that can easily be replaced by an algorithm.

The Catalysts for Competency-Based Learning and Prior Learning Assessments Have Arrived

The combination of rapid shifts in workforce demand and a dwindling supply of traditional students is creating conditions that will drive change.

I’m Facilitating a Webinar on CBE on 1/18

A gentle slope to CBE, hosted by Open LMS.

EdTech’s Funding Problems Are Going to Get Worse

The current leg down in EdTech venture investing is caused by problems in the public financial markets. The next one may be caused by problems in the private markets.

I Would Have Cheated in College Using ChatGPT

In a heartbeat. But only under certain circumstances.

Webinar on International Students on 12/7

In the new world, all colleges and universities need to be thinking globally. Come chat with us about why and how.

e-Literate Now Has Text-to-Speech From ReadSpeaker

“Mr. Watson. Come here. I want to see you.”