The debate around net neutrality so far has been almost as depressing as the set of judicial and administrative decisions that got us here. Central to the debate has been the obsession about how the two-speed internet will “stop the next Facebook/Google/Netflix” from being able to innovate.

Save the Internet does a bit better than most at teasing out some of the other issues (privacy, freedom of speech), but states the business core of the argument like so:

Net Neutrality lowers the barriers of entry for entrepreneurs, startups and small businesses by ensuring the Web is a fair and level playing field. It’s because of Net Neutrality that small businesses and entrepreneurs have been able to thrive on the Internet. They use the Internet to reach new customers and showcase their goods, applications and services.

I’m not going to argue that this is wrong. Monopoly power on this scale is a dangerous thing. Until recently, there were decent laws preventing companies from owning all the media outlets in a single metro — we are now moving towards allowing one company to control most of America’s access to the Internet. It’s easy to put on the weary entitlement of “It’s all just Google vs. Comcast, Goliath vs. Goliath, what do I care?” But, of course, this is the well-established point of anti-monopoly law — the world is a better place for David when Goliath fights Goliath than when Goliath stands unopposed. When Goliath stands unopposed, bad things happen. You don’t have to root for Goliath Number Two to understand the utility of that.

At the same time, these arguments have obscured some of the real threats to education that have nothing to do with the “next Facebook” scenario. Primary among these threats is the issue of what happens to traffic that is not from traditional content providers. I’d like to sketch out what that means for higher education, and why your institution should be talking about the dangers of creating a provider-paid express lane on the Internet.

The BitTorrent Roots of the Current Mess and the Problem of “No-Provider” and “Own-Provider” Services

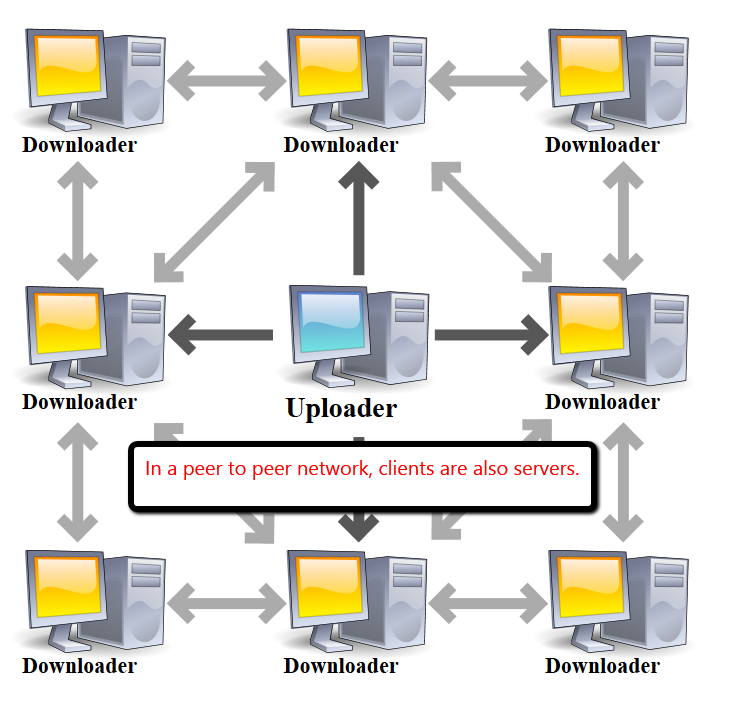

How we got to the current policy is a bit convoluted, but it’s worthwhile to go back to the last great success in the fight for net neutrality. In 2008, the FCC ruled that Comcast had to stop throttling BitTorrent traffic. For those unfamiliar with BitTorrent, it is a peer-to-peer technology that is used to share files on the Internet. Key to its peer-to-peer design is that it is “providerless” — there is no content company that mediates the traffic — all users of a particular torrent connect directly to each other. Your content doesn’t come to me via Google or Dropbox — it comes to me directly from your computer, and from the computers of the others downloading or “seeding” it.

Of course, most campus IT administrators are intimately familiar with the technology, as it was one of the things slowing campus Internet to a crawl several years back. And as such, I’m sure that at least some campus IT administrators sympathize with Comcast’s decision; after all, a number of campuses ended up throttling BitTorrent as well.

But consider the issue the student who wanted to use BitTorrent faced on such a campus. No matter how much money they paid for Internet service, they could never significantly increase their BitTorrent speeds. Meanwhile, the speeds of everything else provided through campus pipes increased.

Under the new FCC rules, all applications and providers can be subject to the same sort of limits (the newer FCC rules apparently ban throttling, but there is slim difference between throttling and the separation of traffic into fast and slow lanes). The difference here is “content providers” can pay a fee to ISPs to get out of “throttling prison” and use the full bandwidth available to the consumer to deliver their service. So Netflix pays Comcast, and gets out of throttling prison. Netflix’s upstart competitor doesn’t have the money and so gets slower service.

Supporters of the proposal say this is where it ends: it’s just a matter of who owes money to whom, and setting up reasonable guidelines for that.

But what about the person using BitTorrent? The problem with BitTorrent is that there is no provider to pay the cable company to get fast lane access. This is not simply a case of how much Goliath owes Goliath. This is a case of David not even having access to the currency system. BitTorrent applications have no content provider status, and so will be relegated permanently to the slow lane.

This problem, that the proposed rules are built around assumptions of a “provider” negotiating with cable companies, is potentially more damaging to education than the actual details of what those negotiations are allowed to entail. To paraphrase Milton Friedman paraphrasing William Harcourt: We are all torrenters now. And that means we have little control over our future.

Beyond BitTorrent: Video Clips for a Media Class

If you think this doesn’t apply to your campus, think again. Because higher education deals quite a lot with services where there is no corporate provider.

First, consider a non-peer-to-peer example. On most campuses, media and communications faculty use clips from films in their classes, and quite often distribute them via the Internet. They are allowed to do this because of explicit protections granted to them by the U.S. government, but because they must show care in how they distribute content, they generally use a free-standing server on campus (such as Kaltura) to deliver them. In a world where you are studying these clips in, say, a class that deals with cinematography, the quality of the clips could be essential to the activity. As it stands now, what you might say in your course description is “Students should have access to broadband in order to view the video clips for homework.”

So here’s a question — how can you make sure your students at home can get “fast lane” access to these clips?

If you were to put them up on YouTube or Vimeo, then YouTube would negotiate the agreement. But in your case, you just are serving them up through a campus server. Who do you call? How much does it cost?

Ok, now that you have done that for Comcast, it’s time to ask yourself — what other cable internet providers do your students have? If it’s an online course, how do you deal with a local cable provider in Athens, GA when you are in Seattle, WA?

There’s no real answer to these questions. Or rather, the answer is clear — unless you are the University of Phoenix, you aren’t going to be able to negotiate this. Your institution is not set up for it. And so, as the fiber revolution rolls out across the nation, most of higher education will be stuck in the copper lane.

A Peer-to-Peer Example: Educational Videoconferencing

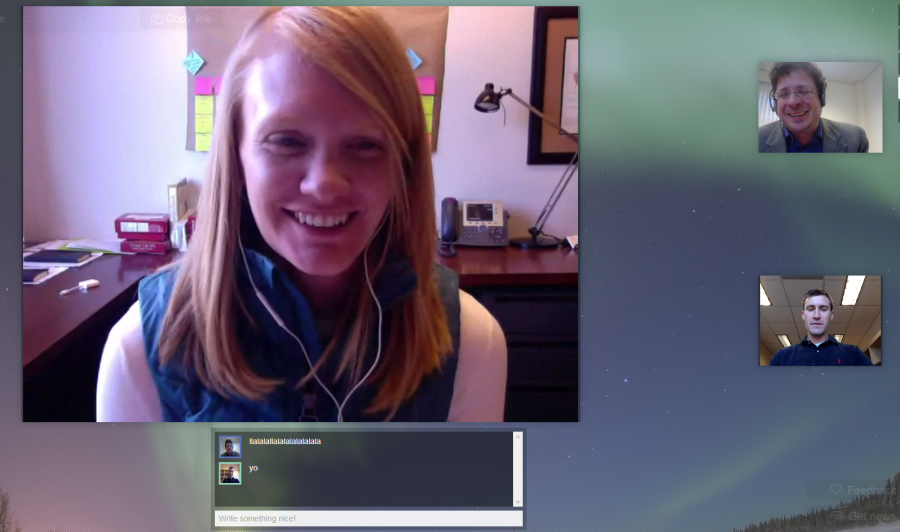

Videoconferencing is one area where the increasing quality of internet connections is poised to have great impact. Most of what sucks about videoconferencing comes down to latency (that ‘you-go-no-you-go’ seconds-long delay that makes you feel like you are conducting class over a walkie-talkie), stability of connection, and visual clarity (which allows you to see the microexpressions that signal to you important things, like ‘Is this student getting this at all?’).

All of these aspects improve with increased bandwidth. And it’s possible, of course, that your third-party video-conferencing provider will be able to pay the fee to Comcast and others that allows your students to tap into such things.

Assuming, of course, that you have such a provider. The recent trend in video-conferencing is toward peer-to-peer products which connect conference participants directly instead of through an intermediate server. This dramatically lowers latency, leading to a conversational flow that more closely resembles face-to-face discussion. As more remote students have access to high-quality connections, peer-to-peer video conferencing has the potential to increase the impact of online education substantially, and, just as importantly, make such models more humane by providing students and teachers access to the facial “microexpressions” and conversational cues that make such events emotionally meaningful experiences.

Except — how will you ensure access to the bandwidth and latency you need to make this work? Your students can’t buy it — they may have the Comcast Super-Turbo-Extra-Boost plan, but that’s only going to increase the speed of prioritized traffic they receive, such as Netflix.

And your institution can’t buy it either, because there is no central server. When your student Jane from Twin Rivers talks, the traffic doesn’t come from an identifiable university computer. It comes from Jane’s computer in Twin Rivers, and goes directly to you and the five other students in the review session. Jane doesn’t have an option to call Comcast and get her traffic into the fast lane. So while Hulu will be able in 10 years to deliver multi-terabyte holographic versions of The Good Wife to your living room, the peer-to-peer video your campus is using will remain rooted in 2014, always on the verge of not sucking, but never quite making it to the next level.

Other Examples

These are just two examples from areas I’m deeply familiar with, but if you talk to other people at your institution, you can uncover other examples fairly quickly. Here’s what you ask:

Is there anything you do in your teaching or research that relies on connections to the Internet and is not delivered by a major third-party provider (such as YouTube, Dropbox, etc.)?

You’ll find out there are quite a lot of things that work like that. For example, there has been a major push to shut down computer labs on campuses as a cost-saving measure; after all, most students have laptops. As we’ve done that, we’ve pushed students into using virtualized software, often across consumer connections. In virtualized scenarios, students remotely tap into high-speed servers loaded with specialized software. It allows a department to make sure that all students have access to the computing power and software they need without needing access to a computer lab.

There’s a lot of potential for virtualized software to reduce cost and increase student access. While having a good connection to the Internet to use it is costly, it’s considerably less costly for many students than having to drive to campus several nights a week to complete assignments, and far more convenient. As consumer bandwidth increases, the dream of virtualizing most of the software students need becomes an achievable reality.

Except… You see where this is going. How does your campus make sure that your student’s virtualized instance gets the maximum bandwidth the student’s connection can support? Failing having a full-time campus cable negotiator, it’s hard to see how this happens. Like the peer-to-peer video-conferencing revolution, the move to virtualization could be over before it has begun, and with it the potential decreases in cost and increases in access.

Once you start to look for this issue, you’ll find it everywhere. There are certain IT functions we keep on campus due to security and privacy issues, for example. We may be pushed into moving these into third-party software if we cannot negotiate the same speed for on-campus functions as for off-campus third-party provided functions. Our students are increasingly working with large datasets as part of their research. How, exactly, does one get fast lane access for one’s 50 GB GIS homework?

These are small problems now, but without continued access to top-tier service they can become big problems soon.

But, Chairman Wheeler says….

Of course, the current FCC Chairman says that the fears are overblown. There are many great sites out there that debunk the FCC’s “Don’t Panic” rhetoric better than I could, but let me deal with three common objections quickly.

First, there is some confusion about whether the new rules allow providers to prioritize traffic to consumers. Wheeler says they don’t, but this is a bit of a word game. To vastly simplify the issue, Wheeler has guaranteed that the on- and off-ramps to the Information Superhighway won’t have slow and fast lanes. The actual highway? He’s determined that’s outside the FCC’s purview. And since any connection is only as strong as its weakest link, having no priority lanes on the ramps means very little if providers are carving up the highway into express lanes and economy ones.
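The weakest-link point can be sketched in a few lines of Python. This is only an illustration: the segment names and the Mbps figures are hypothetical numbers I made up, not measurements, and real networks are messier than a single `min`.

```python
def end_to_end_mbps(path):
    """Usable throughput of a path is capped by its slowest segment."""
    return min(path.values())


# Hypothetical capacities (Mbps) for each segment of a connection.
# In both cases the on- and off-ramps are identical and unprioritized.
express_path = {"on-ramp": 100, "highway (express lane)": 100, "off-ramp": 100}
economy_path = {"on-ramp": 100, "highway (economy lane)": 20, "off-ramp": 100}

express_speed = end_to_end_mbps(express_path)
economy_speed = end_to_end_mbps(economy_path)

print(f"Express traffic: {express_speed} Mbps")
print(f"Economy traffic: {economy_speed} Mbps")
```

Under these made-up numbers, equal ramps do nothing for the economy traffic: the middle segment sets the speed.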

Second, he’s guaranteed that providers won’t be able to “slow down” any traffic, only prioritize some traffic. A simple thought experiment demonstrates the ridiculousness of this claim. During peak hours, Netflix currently makes up about 34% of Internet traffic. The cable companies are now going to make Netflix pay to prioritize their content. Given that bandwidth is a finite resource, it doesn’t take a genius to realize that even if the cable companies just went after Netflix it would adversely impact your university’s efforts. By definition, to prioritize one thing is to de-prioritize something else, and in this case that something else is your connection to your students.
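Here is that thought experiment as a minimal Python sketch. Everything in it is an assumption for illustration: a single congested link, made-up demand figures (the streaming share only loosely echoes the one-third figure above), and a simple "serve paid traffic first, split the rest proportionally" rule that I am not claiming any ISP actually uses.

```python
def allocate(capacity, demands, prioritized=frozenset()):
    """Split a link's capacity among flows.

    Flows named in `prioritized` are served first, up to their demand.
    Whatever capacity is left is shared among the remaining flows in
    proportion to their demand.
    """
    allocation = {}
    remaining = capacity
    for is_priority_tier in (True, False):
        tier = {name: d for name, d in demands.items()
                if (name in prioritized) == is_priority_tier}
        tier_demand = sum(tier.values())
        if tier_demand == 0:
            continue
        # Fraction of this tier's demand that the leftover capacity can meet.
        share = min(1.0, remaining / tier_demand)
        for name, d in tier.items():
            allocation[name] = d * share
        remaining -= tier_demand * share
    return allocation


# Hypothetical peak-hour demand on a link that can carry 100 units.
demands = {"paid_streaming": 40, "campus_video": 10, "everything_else": 70}

neutral = allocate(100, demands)
paid = allocate(100, demands, prioritized={"paid_streaming"})

print("Neutral:", neutral)
print("Paid priority:", paid)
```

Nobody in this sketch "slows down" the campus video. Its share shrinks anyway, because the prioritized flow is made whole first and everyone else divides what is left.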

Finally, sitting here in 2014, it’s tempting to see the bandwidth and low-latency connections we have now as sufficient for our needs. This is part of the rhetoric of the cable companies. What do you use the Internet for now? Well, you’ll still get to do that!

But how much of what we do now could we do in 2004? Would any of our stakeholders — the students, the legislature, the taxpayers, the businesses we send our students into — be happy with us utilizing the Internet at a 2004 level?

Pundits often complain that the world of education does not adopt technology at the speed of business. That’s true, partially. And we could do better. But the currently proposed FCC rules all but guarantee that we won’t be allowed to.

To me, that’s a bigger issue than where the “next Facebook” comes from. And it’s one that we need to start talking about.

Image Credits:

Peer-to-Peer diagram courtesy of Wikimedia Commons: http://upload.wikimedia.org/wikipedia/commons/0/09/BitTorrent_network.svg. Modified by Michael Caulfield.

“Charles Foster Kane is Dead” GIF, by howtocatchamonster. Published at http://howtocatchamonster.tumblr.com/tagged/citizen-kane

Other images are screenshots by Michael Caulfield.