Back in late July we found out that San Jose State University was pausing its SJSU Plus pilot program, which used Udacity for-credit MOOCs, due to low passing rates. The story, broken by Inside Higher Ed, received fairly extensive media coverage, but there was also the promise of a National Science Foundation (NSF) funded research report based on the spring 2013 courses. At the time, I described why the research report was important, despite indications that the lessons learned aligned with well-known practices for online education.

With this many known issues, which have also been documented in several excellent blog posts, why is it valuable for SJSU to use the external NSF-funded report?

In my opinion, there will still be value in A) releasing the full data set and analysis, and B) getting others to understand key points.

Many specialists might understand why the program failed, but many others do not. Campus leaders and policy makers need to learn many of the lessons that are already known in the ed tech / higher ed community, and SJSU Plus will help in this area. Plus, I wouldn’t discount some unexpected findings (e.g. need for self-pacing in math courses as mentioned in student surveys). [snip]

It is important for some of the new participants (and I would add foundations, state governments, university presidents, etc) to fully understand what worked and what didn’t work in this application of online education. The official report from SJSU will provide a valuable service to help with this learning process.

There is also value in this very public case establishing a precedent of pausing pilots that don’t work, evaluating results early in the process, adjusting the course and support design based on findings, and focusing on student learning outcomes as the primary measure of success.

After several delays, SJSU has now released the full NSF-funded research report on Elaine Collins’ official web page (she was the principal investigator). The full report can be downloaded here.

The research team included the Research and Planning Group for California Community Colleges (RP Group). Team members were Rob Firmin, Eva Schiorring, and John Whitmer (from RP Group) and Sutee Sujitparapitaya (from SJSU).

The project underlying the report was titled “Experiments in student mentoring, tutoring and guided peer interaction in Massive Open Online Courses (MOOCs)”, with the following description:

This project assesses the effectiveness of human mentorship and guided peer interaction in the context of Massive Open Online Courses (MOOCs). It is based on the observations that

(a) MOOCs are presently more successful for highly self-motivated individuals

(b) there is a nearly complete absence of interactive human mentoring in MOOCs.

This project will investigate the effectiveness of six forms of human mentoring, including group and individual mentoring as well as instructor-guided peer interaction in small groups. In pursuing this project, the PIs seek to characterize the effects of these different types of mentorship on the collection of variables that measure course completion and learning outcomes.

The overall objective of this project is to find ways in which MOOCs can be made successful among a much broader segment of students.

As can be seen from this description, the report focuses on augmenting traditional MOOCs with human mentoring, which leads to its term Augmented Online Learning Environments (AOLE). The study addressed three research questions:

1. Who engaged and who did not engage in a sustained way and who passed or failed in the remedial and introductory AOLE courses?

2. What student background and characteristics and use of online material and support services are associated with success and failure?

3. What do key stakeholders (students, faculty, online support services, coordinators, leaders) tell us they have learned?

This is an information-rich 44-page report (plenty to chew on here), but here are the key findings:

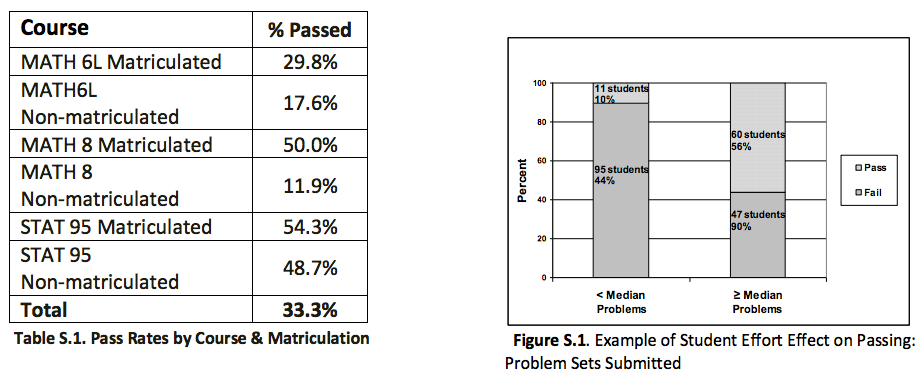

Findings: The research found that matriculated students performed better than non-matriculated students and that, in particular, students from the partner high school were less successful than the other AOLE students. Pass rates varied significantly with course taken and by persistence of student effort as seen in the following table and figure.

The statistical model found that measures of student effort trump all other variables tested for their relationships to student success, including demographic descriptions of the students, course subject matter and student use of support services. The clearest predictor of passing a course is the number of problem sets a student submitted. The relationship between completion of problem sets and success is not linear; rather the positive effect increases dramatically after a certain baseline of effort has been made. Video Time, another measure of effort, was also found to have a strong positive relationship with passing, particularly for Stat 95 students. The report graphs these and other relationships between variables examined by the logistic-regression models and pass/fail.

While the regression analysis did not find a positive relationship between use of online support and positive outcomes, this should not be interpreted to mean that online support cannot increase student engagement and success. As students, Udacity service providers and faculty members explained, several factors complicated students’ ability to fully use the support services, including their limited online experience, their lack of awareness that these services were available and the difficulties they experienced interacting with some aspects of the online platform. It is thus the advice of the research team that additional investigations be conducted into the role that online and other support can play in the delivery of AOLE courses once the initial technical and other complications have been addressed.
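For readers less familiar with logistic regression, here is a minimal sketch of why such a model can show a non-linear, threshold-like relationship between problem sets submitted and passing, as the findings above describe. The intercept and slope are hypothetical values I picked purely for illustration; they are not taken from the report, and the function name `pass_probability` is my own.

```python
import math

# Hypothetical coefficients chosen only for illustration.
# They are NOT estimates from the SJSU/Udacity report.
INTERCEPT = -6.0
SLOPE_PER_PROBLEM_SET = 0.15


def pass_probability(problem_sets_submitted: int) -> float:
    """Logistic model: P(pass) = 1 / (1 + exp(-(b0 + b1 * x)))."""
    z = INTERCEPT + SLOPE_PER_PROBLEM_SET * problem_sets_submitted
    return 1.0 / (1.0 + math.exp(-z))


# Print the modeled probability at evenly spaced levels of effort.
for n in (0, 10, 20, 30, 40, 50, 60):
    print(f"{n:3d} problem sets -> P(pass) = {pass_probability(n):.3f}")
```

With these made-up numbers, the first 20 problem sets barely move the predicted probability, while the next 20 move it a great deal. That is the general shape the report describes, although the actual baseline and steepness depend on the fitted coefficients in the report itself.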

Inside Higher Ed has more coverage of the report release, including updates on the timing of the report.

We plan to add a few posts analyzing the report here on e-Literate, so stay tuned.
