Recently I pointed out that the widely-quoted Babson survey on online learning estimates 7.1 million US higher ed students taking at least one online course, while the new IPEDS data puts the number at 5.5 million. After looking deeper at the data, it appears that the difference in institutions (whether or not an institution offers any online courses) is even greater than the difference in students. This institutional profile is important, as the Babson report (p. 13) noted that institutions offering no online courses gave very different answers from the others, a theme that ran through much of the report: [emphasis added]

The results for 2013 represent a marked change from the pattern of responses observed in previous years. In the past, all institutions have consistently shown a similar pattern of change over time. Different groups of institutions typically reported the same direction of change – if one group noted an improvement on a particular index, all other groups would show a similar degree of improvement. The overall level of agreement with a particular statement might vary among different groups, but the pattern of change over time would be similar. This is not the case for 2013.

As noted above, there was a year-to-year change in the overall pattern of opinions on the strategic importance of online education, and on the relative learning outcomes of online instruction, as compared to face-to-face instruction. In both cases, the historic pattern of continued improvement took a step back for 2013, and all of the changes are accounted for in a single group of institutions: those that do not have any online offerings.

Institutions with no online offerings represent a small minority of higher education – how are they different?

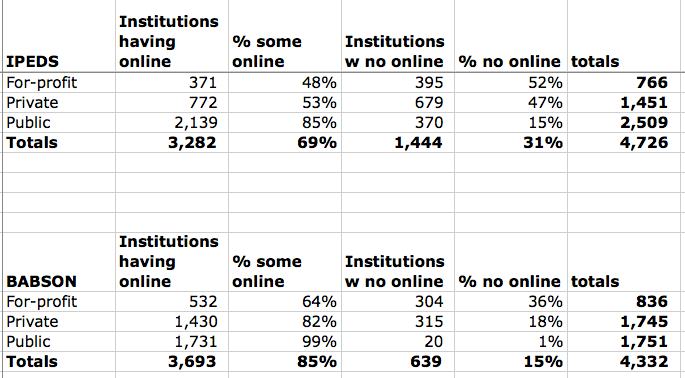

Let’s look at the IPEDS data on institutions versus the Babson data, first by institutional control. I took the data on page 32 of the Babson report and recreated the graph, then I ran the same analysis using IPEDS data. (NOTE: these interactive charts do not come through on RSS feeds, so you will probably have to click through to the post to see them.)

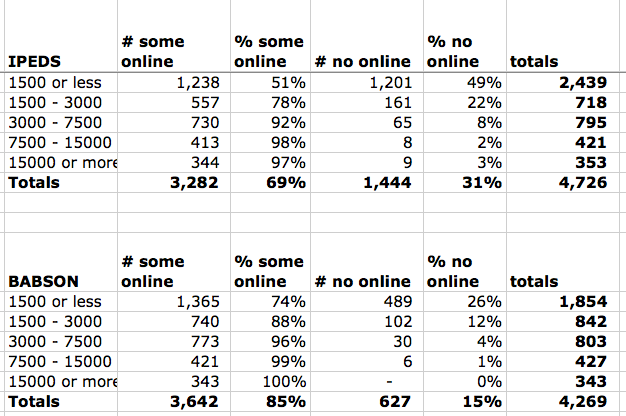

The Babson report also evaluates these institutions by basic Carnegie classification and institutional enrollment. I did not evaluate the former (too messy), but I did run the same analysis by enrollment.
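For readers who want to try a similar tabulation, here is a minimal Python sketch. It is not the analysis behind the charts in this post: the records, the group labels, and the `share_without_online` helper are all made up for illustration, and a real run would load the institution-level IPEDS file instead.

```python
from collections import defaultdict

# Hypothetical toy records: (control, offers_any_online_course).
# These rows are invented for illustration and are NOT real IPEDS data.
institutions = [
    ("Public", True),
    ("Public", True),
    ("Public", False),
    ("Private nonprofit", True),
    ("Private nonprofit", False),
    ("Private for-profit", False),
    ("Private for-profit", True),
    ("Private for-profit", False),
]


def share_without_online(rows):
    """Return {group: fraction of institutions with no online courses}."""
    totals = defaultdict(int)
    without = defaultdict(int)
    for group, offers_online in rows:
        totals[group] += 1
        if not offers_online:
            without[group] += 1
    return {group: without[group] / totals[group] for group in totals}


shares = share_without_online(institutions)
for group, share in shares.items():
    print(f"{group}: {share:.0%} with no online offerings")
```

The same function works for enrollment bands if the first element of each record is a size category rather than institutional control.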

Some notes:

- While I have been able to recreate the universe of 4,726 institutions referenced on page 29 of the report, I cannot get the same total enrollment figure. The Babson data indicates 21.3 million students compared to IPEDS data of 20.6 million. I don’t believe this 3% difference is that meaningful.

- While the Babson data refers to a universe of 4,726 institutions, the data provided is based on 4,332 and 4,269 institutions, with the shortfall coming primarily from far fewer for-profit institutions. There is no explanation for these different numbers, but keep in mind that Babson’s data is from a survey that extrapolates to estimate the universe.

- The big difference that should be obvious is that the Babson data shows fewer than half as many institutions with no online offerings as the IPEDS data – 15% compared to 31%.

Not only does the IPEDS data indicate that twice as many institutions have no online courses as previously reported, but I also question the finding that “institutions with no online offerings represent a small minority of higher education”. 31% is not a small minority.
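The size of each gap can be checked with a few lines of arithmetic. This is a minimal sketch using only the figures quoted in this post; the `relative_gap` helper and the variable names are my own, and the gaps are measured against the IPEDS figure as the baseline, which is an assumption about how to frame the comparison.

```python
def relative_gap(higher, lower):
    """Gap between two figures, as a fraction of the lower figure."""
    return (higher - lower) / lower


# Figures quoted in this post.
babson_students, ipeds_students = 7.1e6, 5.5e6        # at least one online course
babson_enrollment, ipeds_enrollment = 21.3e6, 20.6e6  # total enrollment
babson_no_online, ipeds_no_online = 0.15, 0.31        # share with no online offerings

student_gap = relative_gap(babson_students, ipeds_students)
enrollment_gap = relative_gap(babson_enrollment, ipeds_enrollment)
no_online_ratio = ipeds_no_online / babson_no_online

print(f"Online students: Babson is {student_gap:.0%} above IPEDS")
print(f"Total enrollment: Babson is {enrollment_gap:.0%} above IPEDS")
print(f"No online offerings: IPEDS share is {no_online_ratio:.2f}x the Babson share")
```

Framed this way, the student counts differ by a bit under a third, while the share of institutions with no online offerings roughly doubles, which is why the institutional difference is the larger of the two.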

I am not questioning the research methods of the Babson Survey Research Group nor the value of their annual survey. It is just that we now have a new source of data that must be accounted for. While I do not think the IPEDS data is flawless, it is better than the survey-based data used by Babson. Jeff Seaman, one of the two Babson researchers, said as much in this Chronicle article:

So which number is correct?

The lower one, probably. The Education Department data are more likely to be accurate, “given that they are working from the universe of all schools,” says Mr. Seaman by email. [snip]

The reporting requirements for the department “are such that I would always trust their numbers over ours,” he wrote. “However, I still believe that the trends we have reported for the past 11 years are very much real.”

I hope the analysis I’m doing based on IPEDS data doesn’t come off as nitpicking or attacking the Babson survey. The annual survey has been a very useful source of information, and the trend data as well as attitudinal data cannot be replicated by IPEDS. These Babson reports have enormous influence on the higher education community, being the most widely-quoted source on just how prevalent online education is in the US. It is very important to adjust our thinking based on new information and to be transparent with research data.

It’s kind of amazing that these reports would be the data driving the conversation so long and now that we know they were substantially off, it’s a shrug of the shoulders. It would be interesting to find old quotes that cite the studies and see if the scale of increase was crucial to the quote.

I’m actually not faulting Babson at all, but rather the immediate consumers of the Babson data. Either the data mattered, in which case it’s time for a deep rethink; or the data was just window-dressing on opinion, in which case “data-driven” policy has been a bit of a fraud, and it’s all been “get with the program and here are some scary numbers” nonsense.

I actually think it’s the latter, which is sad.

BTW — I think this work of yours indicates that it’s likely the trends *have* to be wrong as well, at least at a certain level of detail. It’s not just over-counting, but a specific type of over-counting. It might be time to look again at the big 2005 bump? http://www.nbcnews.com/id/15625558/ns/technology_and_science-tech_and_gadgets/t/sharp-increase-online-course-enrollment/#.UuV23hDTm00

I really appreciate what you’re doing here Phil — the question of *in what way* the mis-counting occurred is so crucial to our understanding of what is going on, and no one except you seems to be tackling it.

Mike, thanks for the note, and I agree that there is a big question on the consumption of this information. It’s almost to the point that you have to ask ‘who doesn’t quote the Babson numbers’, and ‘will this change’.

I have some thoughts on the trend data, but so far I’ve held off commenting as Jeff Seaman from Babson (one of the two named researchers) has told me by email that he’s working on “a more detailed examination”. I think it best if we first get his analysis – hopefully it will come soon.

As a side note, I just went through my email thread with Jeff and noticed that I missed one request of his for some of my data. It’s possible that some of the delay in getting a Babson response could be my fault.

I agree with what you mentioned in this blog, but in my opinion there should be a system for those who want distance learning: every university should have a distance education department, so that anyone who needs it can take advantage of it.