A while back, I wrote a post arguing that algorithm-based ed tech like learning analytics and adaptive learning will not sell well as long as educators do not have the mathematical and scientific literacies necessary to understand the rationale and limitations of the ways in which the software makes evaluations. They won’t trust it because they can’t know when and how it works and when and how it fails. It is as if we are trying to invent the pharmaceutical industry in the absence of a medical profession.

Today I will extend that analogy to argue that even revolutionary developments in educational technology will fail to have a dramatic impact (or “efficacy,” in the newly common parlance that was borrowed from medicine) without changes to the fundamental fabric of the institutions and culture of academe. By looking at the history of one particular medical innovation and imagining what might have happened if it had been discovered when the state of medical science and practice looked more like the state of today’s learning science and educational practice, we can learn a lot about how a technology needs to be embedded in a set of cultural and institutional supports in order to achieve widespread adoption, acceptance, and effective use. This has direct bearing on the situation with educational technology today.

An Empathetic View of Pre-scientific Medicine

The anti-microbial properties of the penicillium mold were discovered in 1928 and the modern medicine penicillin was isolated and produced at scale in the 1940s. The thought experiment of this post is to imagine what would have happened if penicillin had been discovered a hundred years earlier, in the 1840s, before many of the institutions of the modern medical profession were in place.

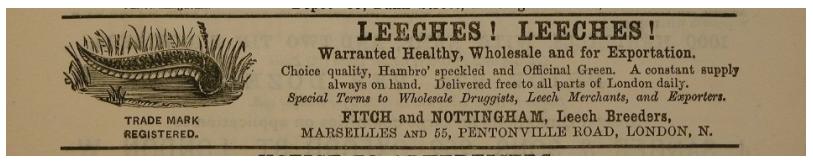

But before we can do that, we need to dispel the myth of pre-20th-Century doctors as ignorant butchers and barbarians. When we see them portrayed in popular culture, it’s usually to evoke horror. What’s the one medical prescription that you’ve seen portrayed most often in the movies and on TV? I bet it’s leeches. And usually in a scene where the treated character is dying and we are meant to be horrified by the crazy quackery of the treatment. Leeches and amputations are the stock in trade of Hollywood portrayals of medicine before about 1930 or so. And that is not an entirely inaccurate picture of popular treatments during these times. But it is badly out of context.

As a whole, early medical practitioners were no less intelligent, empirically minded, compassionate, or dedicated to healing than their modern counterparts. For most of human history, being a healer could be a very dangerous profession, since many communicable diseases that are treatable and no big deal today were fatal and more prone to spread back then. Even as recently as the 19th Century, deadly epidemics of cholera, scarlet fever, influenza, anthrax, and many others were commonplace. Tuberculosis was so prevalent that it was not really an epidemic so much as a fact of life. Infection rates in cities could be close to 100%, and perhaps as much as 40% of working-class mortality was due to tuberculosis in some urban areas. To be a medical practitioner in 1840 was to expose yourself to life-threatening disease on a regular basis.

Nor were their medical beliefs as crazy and disconnected from empirical reality as they are often portrayed today. Illness is very often accompanied by bodily secretions. Sweat. Mucus. Snot. Vomit. Pus. Diarrhea. Blood. We are largely insulated from these secretions in the modern world through a combination of medicine and sanitary aids, but they were out in the open and ubiquitous throughout most of human history. Since there was a high correlation between these secretions and illness, and since the practitioners at the time had very limited empirical tools to work with—biological elements too small to be seen with the naked eye were effectively invisible before the 1600s, and dissection of cadavers was illegal in many cultures through much of human history—it was logical for these practitioners to think about these fluids and their balance within a healthy or unhealthy body. The theory of vital fluids, or “humors,” goes back at least as far as Hippocrates in the 5th Century BC and probably as far back as the ancient Egyptians.

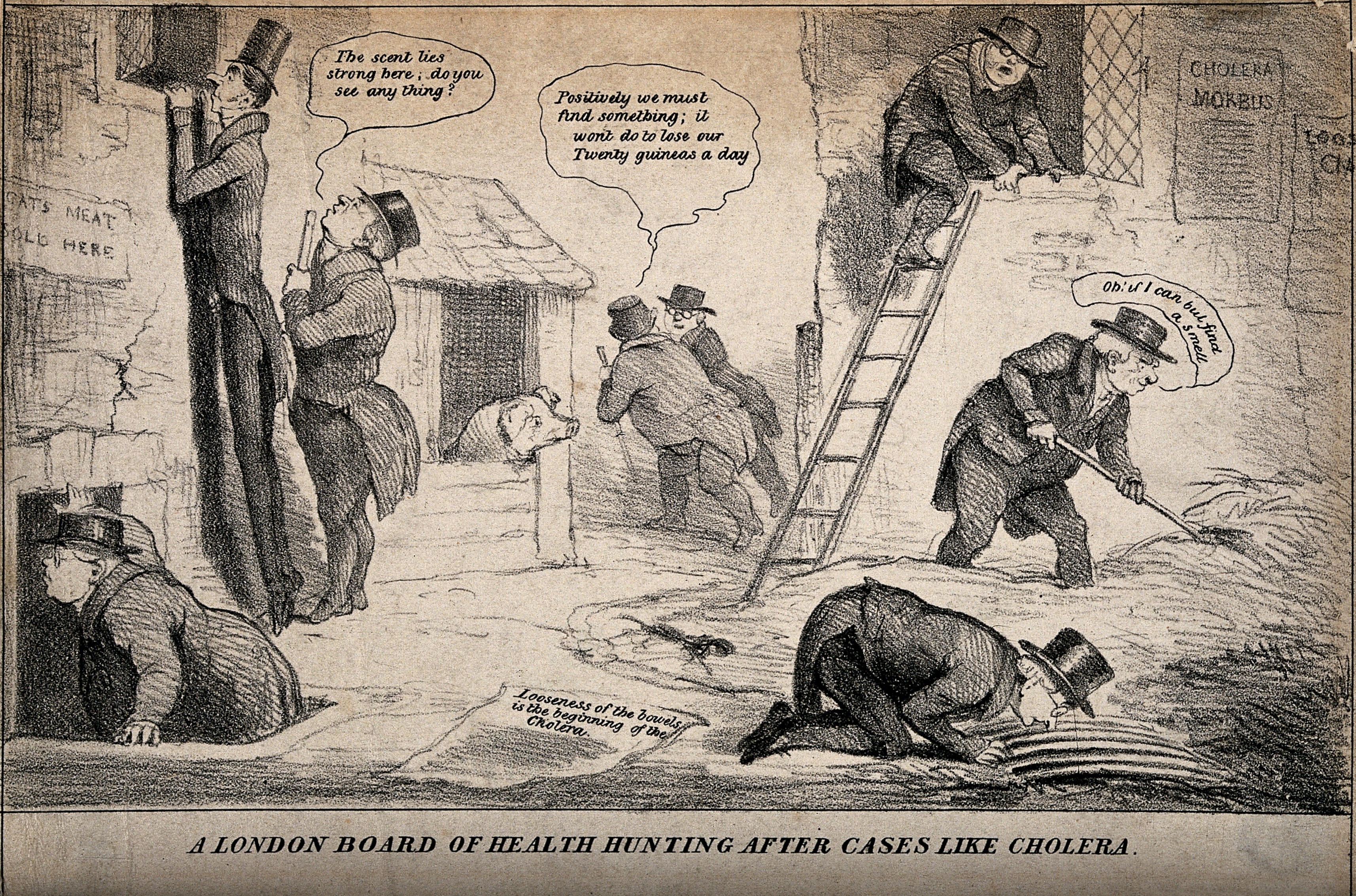

Another fact of illness from which we are largely insulated in the modern world is that it stinks. Literally. All those bodily fluids smell bad. And as they sit around for a while, which they are bound to do in any civilization that does not have, for example, a modern sewer system, they will rot and smell increasingly like, well, rotting dead bodies. That smell was also much more familiar to everyday people in their everyday lives, even as recently as the 19th Century. This was particularly true in poor, urban areas, where entire families could be wiped out by a cholera attack in a matter of days and where residents often lacked the funds to afford a burial. These were also places where human (and animal) excrement built up. The flush toilet may have come into wider use in the 19th Century, but in the first decades of its use, it was not connected to a sewer system. Rather, it typically flushed to a cesspit—basically a big ditch—in the basement of a house. There was a whole industry of people who would regularly come and clean the cesspits. Or not so regularly, especially in poor, densely populated urban areas where the landlords had little incentive to keep up with maintenance and sanitation. It was very common for these cesspits to overflow, to the point where basement floors in houses in poorer neighborhoods were covered in a layer of human shit several feet thick.

The point is that sickness smelled, it smelled like death, and areas where widespread sickness and death were likely to be most common and out in the open—densely populated poor urban neighborhoods—stank to high heaven. This was likely as true in ancient Athens as it was in Victorian London. As a consequence, the theory that sickness was transmitted by bad air, or “miasma,” is about as old as the theory of humors. These ancient healers correctly observed correlations but did not have the knowledge and tools they needed in order to tease out causation. That limitation was not substantially different in 1840 than it was in Hippocrates’ time. Perhaps this is why the basic theories of illness—humor imbalance and miasma—had not changed dramatically in 2000 years in the Western world.

There were genuine medical innovations during these times—herbal treatments and home remedies by individual practitioners that were passed on through informal networks, often from parent to child. We are still rediscovering the wisdom in some of these treatments. But they were not grounded in a deeper understanding of medicine. They were a collection of accidental discoveries and individual experiments, accumulated over time, occasionally codified but never fully understood or ubiquitously shared. And there was no way to definitively establish which remedies genuinely worked and which ones were nonsense grounded in misunderstanding and supported by what “evidence” luck or coincidence could provide.

Educators in 2017 have very similar limitations to these Victorian healers. Many of them are passionate, dedicated professionals who make real sacrifices out of a genuine desire to help others. They try their best to be empirical and develop theories, like learning styles or constructivism, that are based on their observations in everyday practice and the correlations that they observe. But they usually lack scientific tools and training. To make matters worse, they also lack the clear and proximate outcomes that Victorian doctors had. Death and disfigurement are easy to see. Failures of education are often not as obvious and may take years or even decades to fully manifest. At their best, educators are most often folk healers who have accumulated what knowledge they can, one bit at a time, adding common sense and powers of observation where possible, but whistling in the dark more often than any of us would like to admit.

The Miracle of Penicillin

There is one more piece of context we need before we can get to our thought experiment about inventing a pharmaceutical industry in the absence of a medical profession. Since penicillin will be our example in this exercise, we need to understand just how big a deal it was. Today it is one drug of many in our pharmacopeia and has been supplanted in many cases by newer drugs. We understand there are many medical problems it won’t solve and that treating it like a panacea can have bad effects (like antibiotic-resistant diseases, for example). That’s a good frame for thinking about the potential and limits for any educational technology to improve outcomes. Ed tech tools should be thought of as treatments for specific problems under specific circumstances with specific limitations, much like penicillin and other antibiotics.

We all understand that much about penicillin because it’s part of our lived experience. But that’s only half the picture. The other half is how much penicillin changed modern life in ways that we take for granted. A treatment doesn’t have to be a cure-all in order to be a miracle cure.

As I mentioned, fatal illness was commonplace in 1840. Life expectancy in England was roughly 40 years and close to the same in the United States. Much of the early mortality was due to diseases that treatments like penicillin have either effectively eradicated or reduced to nuisances. For example, when you think of streptococcus, you probably think of a sore throat your kids get that causes you to have to keep them out of school for a few days and make a trip to the pharmacy. But some strains of the streptococcus bacteria can be deadly. In 1911, a strep outbreak caused by contaminated milk in Boston left about 1,400 people sick and 54 dead.

Streptococcus also causes scarlet fever. In the 1840s, scarlet fever was one of the most common fatal infectious childhood diseases in urban Europe and the US. It was more common than the measles and had as high as a 30% mortality rate. Puerperal fever, also known as childbed fever, was another lethal and particularly cruel streptococcus-caused disease. It struck mothers of newborn children and often caused a painful death. The average infection rate for mothers in the 1840s was between 6 and 9 for every 1,000 deliveries, but frequency could be as much as 10 times higher during bad outbreaks and, ironically, in hospitals, where the disease was spread by doctors who did not yet understand the importance of working in a sterile environment.

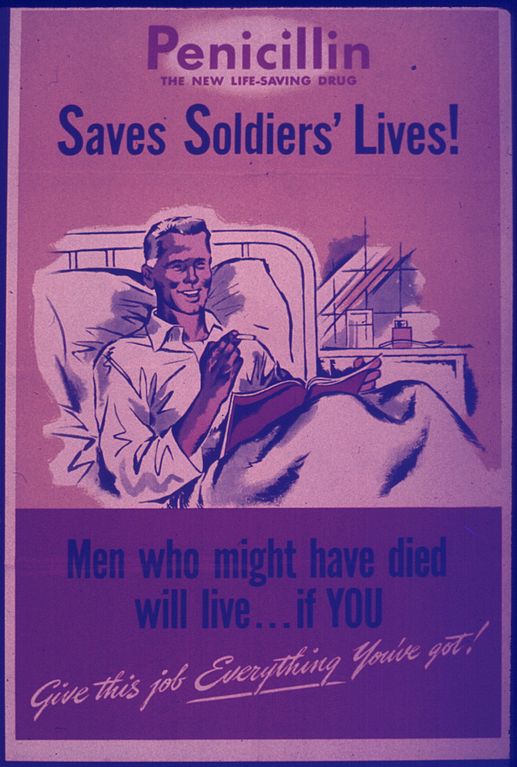

And that’s just strep. Penicillin can treat a range of other deadly or crippling diseases, like pneumonia, staph infections, listeria, and a variety of post-surgical or wound-related septic conditions. (One of the drivers for the perfection of penicillin production in the 1940s was to treat infections on the battlefield in World War II.) Chlamydia. Typhoid fever. The list goes on. Even though penicillin plays a very small and unobtrusive part in our daily lives, or even our healthcare experiences, it absolutely transformed modern health and life.

One could imagine certain kinds of adaptive learning technologies playing an analogous role in education. Any teacher with even a modicum of self-awareness knows that, no matter how good she is, she inevitably fails, on a daily basis, to get some individual students to learn. In almost every class of every course, there is probably some student who is missing something. That something might turn out to be inconsequential, or it might be a vital building block that will make or break future academic success. And we often don’t know why we’re failing or even when we’re failing. It’s easy to imagine some form of adaptive learning technology preventing some of those mishaps, turning what might have been a dropout-inducing failure into a short-lived detour and maybe an annoyance for an otherwise successful student.

To be clear, I’m not claiming that any solution on the market today is effective in this way. We just don’t know. But penicillin is probably a good analogy for the kind and the magnitude of the breakthrough that we can aspire to as a best case for an educational technology like an adaptive learning product. Even in the best case, it would no more replace the educator than penicillin could replace a doctor. But it could radically reduce or even eradicate certain kinds of classroom failures when prescribed correctly.

So now we have all the pieces in place for our thought experiment. Educators today can be thought of as being roughly like healthcare practitioners in the 1840s. (I will continue to develop this part of the analogy going forward in this post.) Our best hope for adaptive learning or similar algorithm-based technologies is that they deliver an improvement on the scale of penicillin in the 1940s.

What would happen if you were to try to introduce the 1940s invention into 1840s medical culture? What happens if you try to introduce modern pharmaceuticals into a world without a modern medical profession?

Physician, Heal Thyself

The road from 1840 to 1940 is the road from miasma and humors to germ theory. In order for penicillin to take hold in medicine as an efficacious and widely adopted treatment, physicians had to believe that many diseases are caused by bacteria that are too small to be seen with the naked eye, that there is a reliable standard of proof linking causality between a particular bacterial strain and a particular disease, and that the key to understanding and eliminating a disease is understanding how the bacteria are transmitted and how they can be killed.

Communicability

The first step is to believe that diseases are communicable. Miasma, the idea that the air itself causes illness, is not the same as the belief that disease can pass from patient to patient through the air. What we will find with many of these steps is that, while the necessary new idea had been around in some form for a long time, it failed to take hold until the conditions were right for the medical community to be open to the “new” idea. In the case of contagion, the physician who is most widely credited with first suggesting something like communicability was the Italian Girolamo Fracastoro, in 1546. Building on an alternative concept of disease that was almost as old as miasma, he argued that there were “seeds of disease” that could be passed from person to person via breath or the bloodstream. In 1722, Englishman Richard Mead argued that the pneumonic plague, at least, was communicable and, building on Croatian and Italian practices of isolating plague victims dating back to the 1300s, argued for quarantine.

But neither man was successful at changing the dominant model for understanding disease. A century later, the argument that diseases are communicable was still controversial. In 1843, Oliver Wendell Holmes, Sr.—father of the more famous Supreme Court justice—published a treatise arguing that puerperal fever is contagious in what has since come to be known as the New England Journal of Medicine, the oldest medical publication in the United States (having been first printed only 32 years earlier, in 1811). Dr. Charles Meigs, head of obstetrics at Philadelphia’s prestigious Jefferson Medical College, was one of the more prominent critics of Holmes’ theory. In 1855, Meigs was asked to investigate an outbreak of the disease connected to one Philadelphia doctor. In one three-month period, 37 of his patients contracted the disease and 15 died from it.

Meigs concluded it was a coincidence. He could not believe that one of the pillars of medical understanding was wrong.

This set off a highly public ongoing argument between Meigs and Holmes, which Holmes ultimately won. One of the big influences on the outcome of this debate was an investigation by English physician John Snow into the devastating cholera epidemic of the previous year in the crowded Soho district of London.[1] Through careful detective work, Snow was able to trace the path of the outbreak back to Patient Zero—a baby who had contracted the disease elsewhere. It turns out that the overflowing cesspit in the tenement where the family was living was directly adjacent to a well that fed a popular public water pump. The diapers of the infected baby were thrown into the cesspit, from which the contamination reached the water supply. Snow, a proponent of germ theory, argued that a germ was being transmitted through the water. He advocated that the city should remove the handle from the infected pump and that area residents should boil their water. Once these steps were taken, the epidemic subsided.

This work was, in fact, similar in nature to the earlier detective work of Oliver Wendell Holmes. A method was emerging that marked the beginnings of modern epidemiology. (It also marked the beginnings of modern sanitation. Though it would take a while, Snow’s study was influential in the eventual decision to construct London’s sewer system.)

Germs

Snow’s discovery was influential in convincing the medical community that diseases can be communicable and spread through water, but he didn’t have conclusive proof that bacteria caused cholera. As with communicability, the…uh…germ of germ theory was not new. The ancient Greek historian Thucydides, a contemporary of Hippocrates, argued from observation of a plague in Athens that the disease appeared to spread from person to person. Following this, a Greco-Roman rival theory to miasma developed in the idea of external “seeds” that carry disease. A little less than two thousand years later, Signore Fracastoro’s argument was really an extension of that theory. But it was abstract and didn’t have the same intuitive, observation-based explanatory power as miasma and humors. It didn’t take hold.

That began to change in the 1600s, when microscopy developed to the point where bacteria could be seen. Dutch microscopist Antonie van Leeuwenhoek first observed bacteria (which he called “animalcules”) in 1676. There were several scientists in the 1700s who argued that these tiny organisms could cause disease. They were not taken seriously by their contemporaries. But at least there was proof they existed.

By the way, many of these medical and scientific debates took place on the editorial pages of general-interest newspapers. The medical journal was a 19th-Century invention. Twelve years after the founding of the New England Journal of Medicine, the Lancet became the first general medical journal in England in 1823. By 1840, there still weren’t a lot of high-quality outlets for cutting-edge medical discussions. So many debates of the day took place in the press. Arguments for boiling water to prevent the spread of cholera might appear side by side with a discussion of depriving patients of fluids (when it would later turn out that one of the most effective cures for cholera is rehydration) and advertisements for Smith’s Castor Oil cure-all. And the “medical” articles would often be published by people with no scientific training. There was no legal requirement for any degree or certification whatsoever in order to practice medicine.

Before you get too comfortable in your sense of superiority of the modern world, consider that, today, there are no legal requirements or even norms for professors to have any training in teaching, and that many articles about pedagogy and even effectiveness “study” results appear in non-peer-reviewed trade magazines or even the popular press, right next to product advertisements.

Putting it Together

When John Snow made his discovery of cholera in the well in 1854, he was able to see the cholera-causing bacteria in the water and in the patients. This was highly suggestive. But it wasn’t conclusive. One of the revelations that came with the microscope was that bacteria are everywhere. Just because certain bacteria were coincident with a disease did not prove that they caused that disease.

Nor did the pioneering work of brilliant and prolific French chemist Louis Pasteur provide proof positive. In the late 1850s, Pasteur conducted a series of experiments showing the role that yeast—microorganisms—play in fermentation. This began to show a mechanism by which the micro world could affect the macro world. In 1865, he patented pasteurization, having proven that bacteria do not grow in sterile conditions. Among other things, this provided more theoretical backing to John Snow’s recommendation to boil water as a way of reducing the transmission of cholera.

Speaking of which, Pasteur also developed a vaccine for chicken cholera using a weakened strain and proved that chickens given the vaccine had developed immunity to the virulent strain. So this was yet more evidence that specific bacteria cause specific illnesses and interact with our bodies in complex ways.

But it still wasn’t absolute proof positive. Opinion in the medical community was beginning to shift, but the millennia-old predominant theories of medicine had not yet been universally overturned.

The final discoveries that would prove to be the tipping point for germ theory came from a German physician who was Pasteur’s arch-rival: Robert Koch. His early work was on anthrax. Today we think of it as a weapon that is created in a lab, but as recently as the 1870s it was a commonly occurring and somewhat mysterious natural disease, often affecting cattle. It would appear for a few years, grow strong, then disappear altogether for a while, only to reappear in the same pastures some years later. Koch identified the particular bacterium that causes anthrax and showed how it could lie dormant in the soil (like, say, in the decaying bodies of cows that had died of the disease and been buried in the pasture) for years and still be infectious. More importantly, in 1890, he and a colleague published a four-point method, known as Koch’s Postulates, for demonstrating the causal connection between a microorganism and a disease:

- The microorganism must be found in abundance in all organisms suffering from the disease, but should not be found in healthy organisms.

- The microorganism must be isolated from a diseased organism and grown in pure culture.

- The cultured microorganism should cause disease when introduced into a healthy organism.

- The microorganism must be reisolated from the inoculated, diseased experimental host and identified as being identical to the original specific causative agent.

This is essentially the invention of the modern scientific method for microbiology.

All of the developments I described in this section took place after 1840. There are also other relevant late-19th-Century developments, like the invention of antiseptics, the proliferation of and competition among medical colleges, and the growth of international medical conferences. And there were critical early 20th-Century developments, like rigorous requirements for medical licensure. These developments all paved the way for the discovery, refinement, and acceptance of penicillin.

Given all that, how would the medical establishment of 1840, such as it was, have responded to a claim that a common mold could cure scarlet fever, childbed fever, post-wound infections, and a host of other deadly ailments?

Probably about as well as they would have responded to literal snake oil, and not as well as they did to leeches or fresh-air cures. It likely would have been lost in the noise. In fact, moldy bread was sometimes used to treat infections in ancient Greece and Serbia. Like the quarantine efforts during plague outbreaks, the treatment’s success did not protect it from relative obscurity.

Efficacy and Social Infrastructure

It’s easy to tell the story of penicillin as a story of scientific innovation building upon other scientific innovations. But the more important point from the ed tech perspective is that the real-world efficacy of an innovation is heavily dependent on the social system and communication channels through which the innovation is evaluated, accepted, and diffused. This is classic Everett Rogers stuff.

Let’s review some of the changes that had to happen in the medical social system in order to make penicillin possible:

- There had to be a critical mass of researchers working on the same or adjacent problems and developing a shared understanding of the problem space.

- Those researchers had to have trusted channels of communication like peer reviewed journals and international conferences where they could share findings and debate implications.

- The research community had to develop a set of shared research methods and standards of proof.

- The practitioner community and the research community had to be so closely intertwined in their identities that they were hard to separate. Physicians had to see themselves as men of science. (They were mostly men at that point.)

- Any innovation has to fit with other co-evolving innovations and processes (such as antisepsis and public health infrastructure).

- The fusion of the research and practitioner communities entailed a requirement for practitioners to be thoroughly trained and certified in the science before they were allowed to practice.

How much of this exists in education today? Very little. There are plenty of researchers, but they are fragmented across multiple communities. Those communities often don’t speak the same theoretical language, don’t share research methods or standards of proof, and don’t communicate with each other very often or very well. One of the many manifestations of this problem is the proliferation of meta-studies with null results. These efforts to find conclusive evidence for the efficacy of an approach are often little more than paper towers of Babel. Because the studies being compared are not conducted using common practices and standards of proof, there is just too much noise caused by the differences among the experiments to be able to tell anything.

But the bigger problem is that there is an enormous gulf between researchers and practitioners. Not only do most professors receive zero training in teaching, but many of them are actively discouraged from thinking that studying educational research or even improving their craft as classroom teachers is worth their time. Far from being told that it is vital to their career advancement, they are taught that it is a distraction.

If somebody were to invent the educational technology equivalent of penicillin today, I have very little confidence that it would get wide adoption or broadly effective application. The social structures simply aren’t there to enable such an advancement to be recognized, fit into current educational practices well enough to seem sensible to and adoptable by practitioners, and get woven into the fabric of practice as part of the accepted knowledge of educators everywhere.

Nor is this something that could be “Uberized” through the magic of machine learning. The practitioners are not a bug in the system; they are a feature. The state of 21st-Century learning sciences is strikingly similar to that of early 19th-Century medicine. We are studying complex processes that we largely cannot see. When we do develop tools that give us visibility, we often lack the theoretical structure—the germ theory, for example—to make sense of what we are seeing. Many of the bits we are learning, we don’t know how to apply yet, and much of what we know how to apply is disconnected from our still-blurry picture of how learning works. While we have early glimmers of understanding into the basic biological processes of learning and a rudimentary grasp of the sciences for understanding macro-level educational processes—think microbiology versus public health, with epidemiology somewhere in the middle—we are very far from figuring out how all of those pieces fit together into a system of knowledge that can drive the design of our system for education.

We’re not going to cross these major horizons in human understanding by repurposing some stock-trading or ad-selling or even brain-scanning algorithm. The creation of a system of education that is as deeply grounded in scientific inquiry as our system of medicine is today will require human ingenuity. Lots of it. We don’t need to get rid of educators or to get them “out of the way.” On the contrary, we need to recruit them into the scientific effort. We need curious, ambitious, empirically minded physicians of the classroom who can infuse their commitment of care with science and their science with a humane commitment of care. And we need them to be talking to each other and learning from each other.

There are lots of ingenious people working in education. But at the moment, they are mostly working on their own or in small groups with little ability to get the attention of the large population of professional educators who could test and refine their innovations, rendering them practically useful and efficacious. Despite the insights being gained here and there in laboratories, classroom studies, and informal daily experiments of lone educators, we do not have the kinds of social structures in education that enabled the breathtaking progress of 19th- and 20th-Century medicine. We know from experience that isolated pockets of humans can be aware that moldy bread cures infections without that awareness leading to society as a whole getting anywhere close to “discovering” penicillin for literally thousands of years. Mass communication didn’t change that; the advent of the newspaper did not guarantee that the medical information it spread was any better than (or even as good as) grandma’s best home remedy. We are not going to tweet, blog, or Facebook our way out of this rut.

I see only a few very early signs that we are developing the social infrastructure for empirically driven progress in education. Until we do more, I have little hope that learning sciences or educational technologies will lead to any big breakthroughs in improving educational outcomes.

[1] “You know something, John Snow.”

I think your analogy is a little strained, though you do hit the nail on the head towards the end. The real issue is siloed information on best practices and almost no sharing of curricular materials beyond the teacher down the hall.

I remember reading a book of chess puzzles and in the foreword, the author explained how the field of chess puzzles blossomed only in the late 19th century with the advent of rapid mail delivery. Before, every enthusiast was likely the only person in a several-mile radius who enjoyed such puzzles and the isolation severely limited the give and take that creates a shared body of knowledge and practice.

I disagree. This isn’t just about dissemination. It’s also about literacy and shared assumptions. Sharing of curricular materials does not solve these problems. Conversely, these problems make sharing of curricular materials less valuable and therefore less likely to happen because educators who might adopt curricular materials designed by somebody else don’t necessarily share the same assumptions about good teaching and course design.

I understand your point, but I think that more and consistent sharing is the only way to drive consensus on what is good pedagogy. I can’t see everyone coming to agreement in principle and only then agreeing to share their materials.

Every time I try to bring up sharing of curricular materials with my teacher friends, nearly all of them respond that their particular situation, collection of students, course requirements, etc. are unique. I think that sort of hubris is severely curtailing the advancement of the field.

I think that’s the real analogy to earlier medicine. In the absence of strongly supported facts, every small town doctor developed their own explanations and treatments.

Your analogy makes my point. Why would small town doctors swapping treatments convince each other that the explanations of whether and why those treatments should work are valid? “Facts” are agreed upon things. Everyone “coming to agreement in principle” is also known as shared understanding, which is supported by “facts” that are validated through agreed upon methods. Do you all believe in the effectiveness of continuous formative assessment and share a definition of what it means? Do you share the belief that the effectiveness of the technique has been proven based on reasonable standards of proof? If so, then your curricular materials that are designed to support continuous formative assessment are more likely to be adopted by your peers. Lacking that shared understanding, they will not.

I really appreciate the work you put into all of your essays. This one was particularly compelling. I think your conclusions are correct, too. I am of the opinion that we have reached peak efficacy for ed tech and are past it. Whatever moldy bread there is has been used in some classroom somewhere and the signal it sent was indistinguishable from the noise. Give it a couple thousand years? The systems at play are so complex and the incentives are hazy. The protagonists are flawed and often have an inaccurate and definitely uncompassionate view of the luddites, who range from passionate and sincere and intelligent to disconnected and uninterested, to, probably, antagonistic. The anti-“elitist” view that has grown louder and louder breeds a reasonable defensiveness on the part of professors; without the deference that the 19th century physician was given in a community, would he ever have been able to see where he was wrong?

In various places above I would have done well to include scare quotes. “Peak efficacy,” and “ed-tech,” in my comment, both refer to current overvalued fixes for contemporary issues that will require much larger systemic change unlikely to come from tighter application to either quarterly OKRs or quarterly peer reviewed recommendations. Implicit in my comment is a belief that there is a literally “better” way to be found. So in the long run we aren’t, by definition, at peak efficacy.