To paraphrase the intro paragraph from January’s post on the George Mason University report: another month, another deeply flawed report about online education in US higher education, this time by Di Xu (assistant professor of educational policy and social context at the University of California, Irvine and a visiting fellow at AEI) and Ying Xu (Ph.D. candidate at the School of Education at the University of California, Irvine). The report is titled “The promises and limits of online higher education: Understanding how distance education affects access, cost, and quality”.

While the supply and demand for online higher education is rapidly expanding, questions remain regarding its potential impact on increasing access, reducing costs, and improving student outcomes. Does online education enhance access to higher education among students who would not otherwise enroll in college? Can online courses create savings for students by reducing funding constraints on postsecondary institutions? Will technological innovations improve the quality of online education?

This report finds that, to varying degrees, online education can benefit some student populations. However, important caveats and trade-offs remain.

In many ways this report takes a similar approach to the GMU report and a prior one by Caroline Hoxby from Stanford University, which was subsequently withdrawn, in asking important questions but providing flawed analysis to support conclusions. The problems with the American Enterprise Institute (AEI) report lie in its description of the history of online education and the 50 percent rule, the usage of data to describe the “supply side” of online, and some misinterpretations of IPEDS data. The flaws are hard to overlook, which is a shame, in that much of the qualitative discussion on online education provides a nuanced set of answers to the questions posed above.

The Good

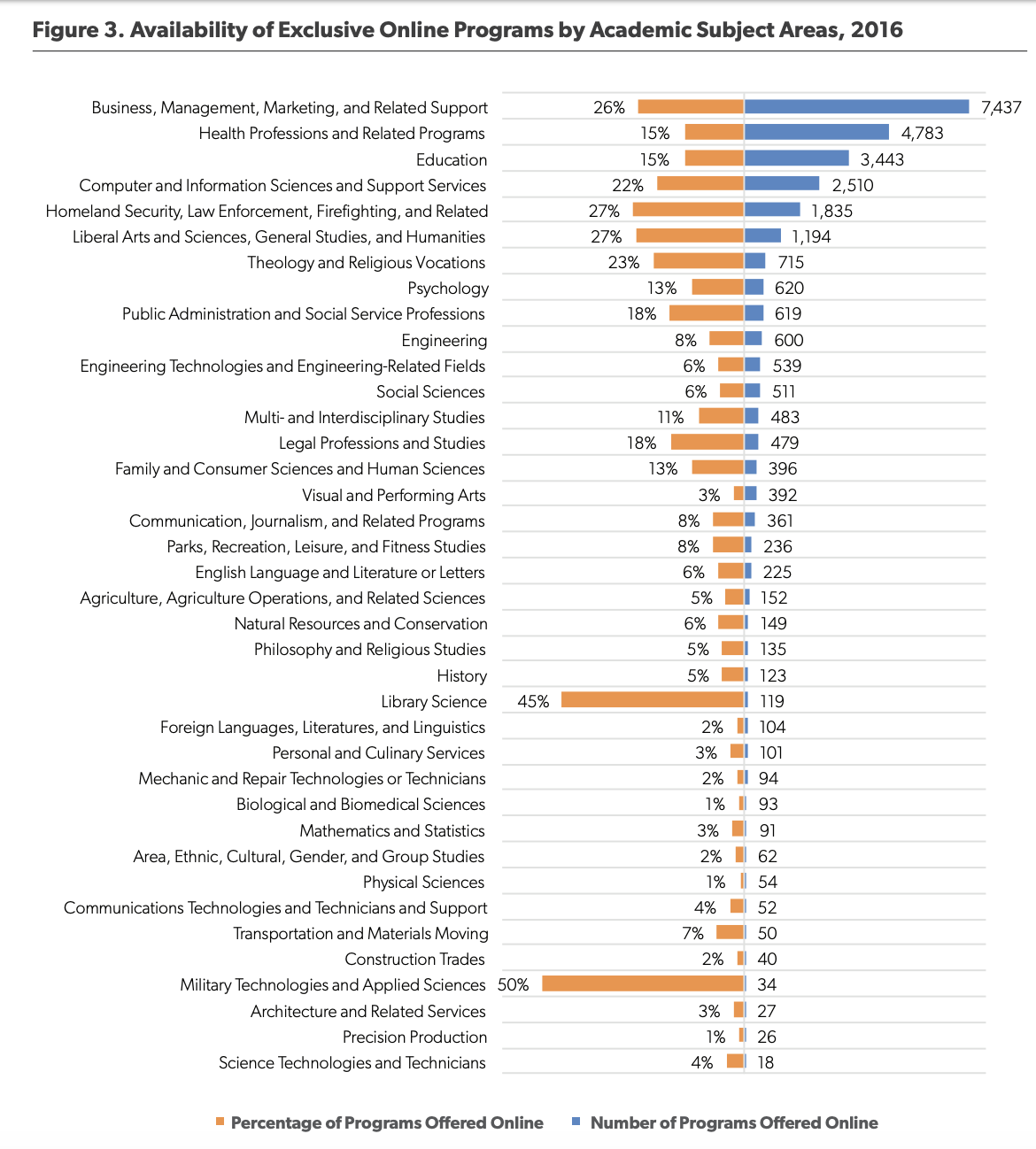

The AEI report takes a look at a little-used portion of the IPEDS data set: the Completions survey and its program-level data on whether an institution offers certain programs at all and whether they are offered fully online (as distance education). This data has its flaws, which we’ll get to below, but it was quite interesting to get a summary view at the program level.

After a relatively solid discussion of research findings on Online Education and Student Outcomes, which summarizes positive and negative results along with the context and limitations of the relevant research, the report presents its discussion of known strategies to improve online education. This is a welcome relief, as many studies treat online education as a verdict to be reached about online vs. face-to-face, while this one summarizes known methods for continuing to improve a necessary modality.

Based on the growing knowledge regarding the specific challenges of online learning and possible course design features that could better support students, several potential strategies have emerged to promote student learning in semester-long online courses. The teaching and learning literature has a much longer list of recommended instructional practices. However, research on improving online learning focuses on practices that are particularly relevant in virtual learning environments. These include strategic course offering, student counseling, interpersonal interaction, warning and monitoring, and the professional development of faculty.

The Bad

The introduction relies heavily on the “50 percent rule” and the 1998 and 2006 changes to this rule as key points in the expansion of online education. This regulation did have an effect, but so did a number of other factors not mentioned in the report. To make matters worse, the report’s wording of the rule conflates students and institutions. For example, in an email conversation, Russ Poulin from WCET noted that the following statement is inaccurate:

Similarly, the HEA also denied access to certain types of federal financial aid and loans for students who took more than half their courses through distance courses.

yet this statement is accurate:

…the rule dictated that institutions that offered more than 50 percent of their courses through distance education or enrolled more than half of their students in distance education courses would not be eligible for federal student aid programs.

The regulation applied to institutions and in no way measured this usage at the student level. I find that this article from New America does a much better job describing this regulation’s history and impact.

Update 3/8: Poulin also noted (see comment below):

After talking to you Phil, the oddity of the 50% discussion being front and center hit me even more. The lifting of the 50% rule had an impact on only a small number of institutions. Several for-profits and a small number of non-profit and public universities. The vast growth in distance learning has primarily been in institutions that get nowhere near the 50% mark, so the change in that rule was not a direct influence in their decision to enter the distance education market. To place it front and center seemed odd to me and not a real reflection of the motivations for most college leaders.

The report also confuses institutional vs. student level data in looking at per-state online statistics.

Finally, considering that state-level policies may shape online learning in unique ways, Figure 13 shows online enrollment by state in the 2016–17 school year. Unsurprisingly, the most populated states, such as California, Florida, and Texas, also had the largest number of online course takers. Once accounting for between-state differences in overall higher education enrollment, four states have the largest share of students who enrolled in at least one online course in 2016: Arizona (61 percent), Idaho (52 percent), New Hampshire (58 percent), and West Virginia (57 percent).

This might be nitpicking, but the IPEDS data referenced is for institutions located in each state, not students located in each state. A report trying to make sense of a complex subject should get this information correct and not add to the confusion.

The Ugly

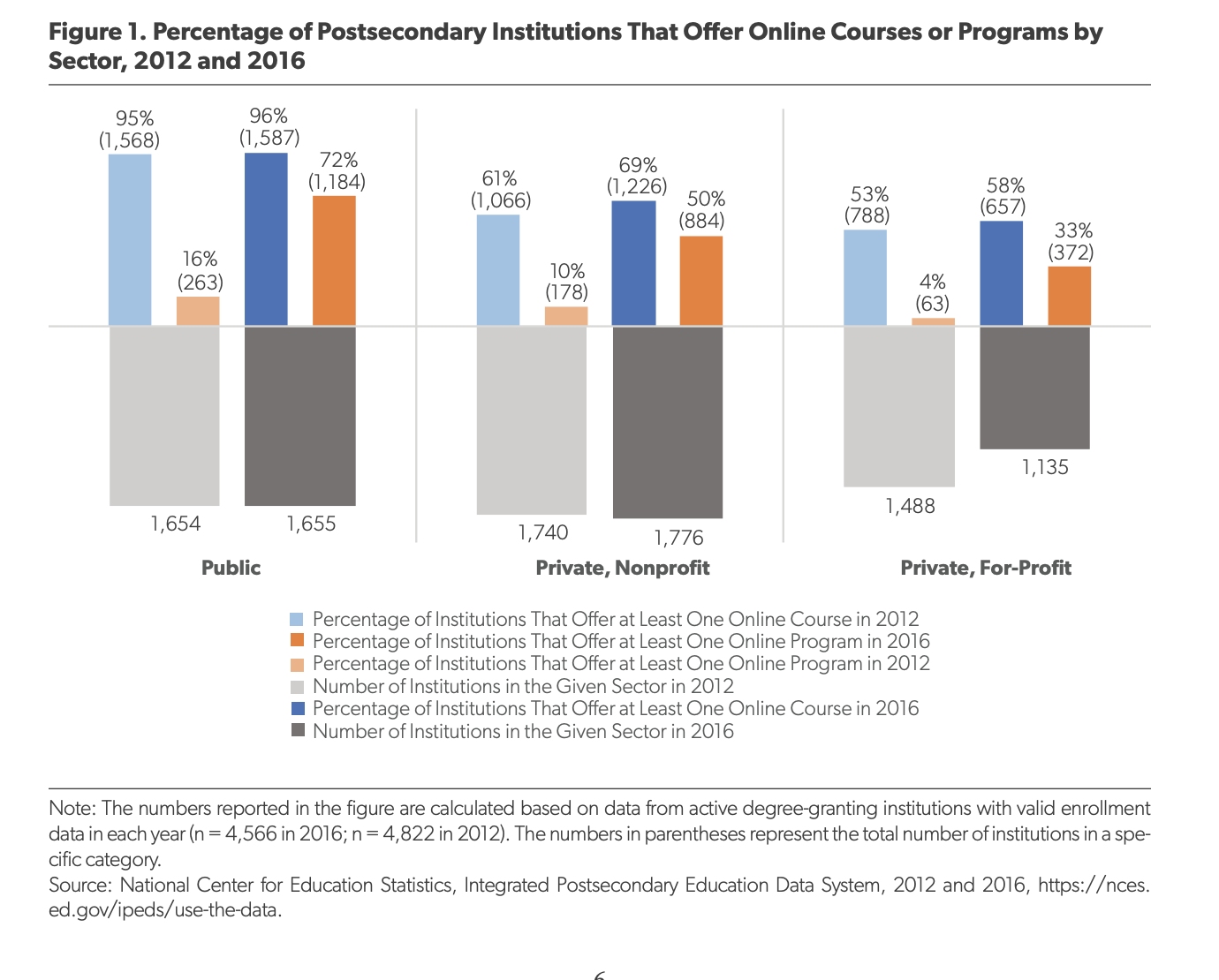

The worst aspects of the report can be seen in figure 1 and an attempt to summarize changes in the supply side of online education. The authors chose to define the supply side as number of institutions offering at least one online course or one online program, using the aforementioned Completions / program-level data.

When I shared this image on Twitter, Kevin Carey pointed out some results that seem nonsensical.

Wait, so from 2012 to 2016, the percentage of public institutions offering at least one online programs went from 16% to 72%, whereas at for-profits it went from 4% to 33%?

— Kevin Carey (@kevincarey1) March 5, 2019

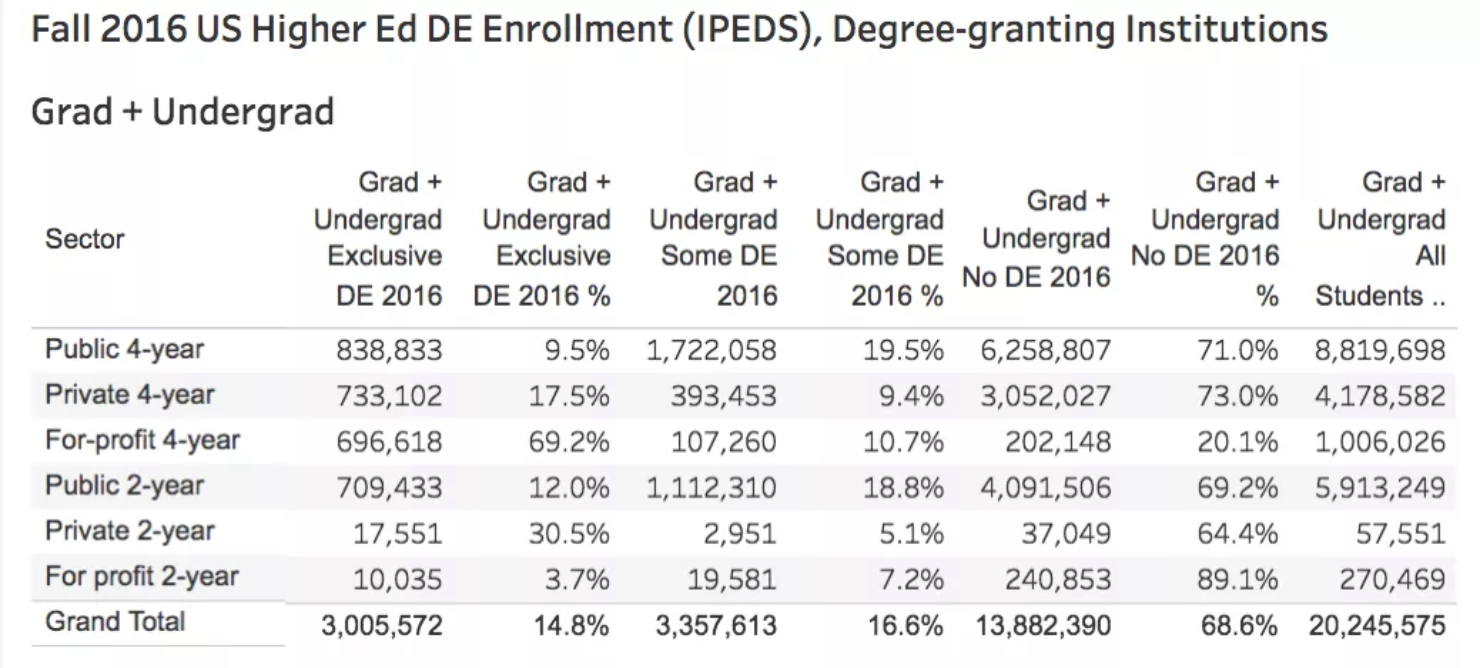

The GMU report and the Stanford / Hoxby report made the more common mistake of essentially conflating online education with the for-profit sector, but this data makes little sense on the surface – implying that the for-profit sector offers relatively few online programs compared to public and private institutions. Looking at our 2016 IPEDS profile, you can see that 4-year for-profits by far have the greatest percentage of students in fully online programs (69%). How does AEI measure for-profits as much lower in offering fully-online programs?

It took a while to figure out, but I think the authors made two mistakes. One is that they combined all for-profits together (2-year and 4-year), which is misleading since 2-year for-profits have the lowest usage of online education and include a bunch of really small schools. This combination cuts the for-profit numbers dramatically. Look at the 2012 summary data below, where I show data for each sector and then combine the 2-year and 4-year sectors for public, private, and for-profit.

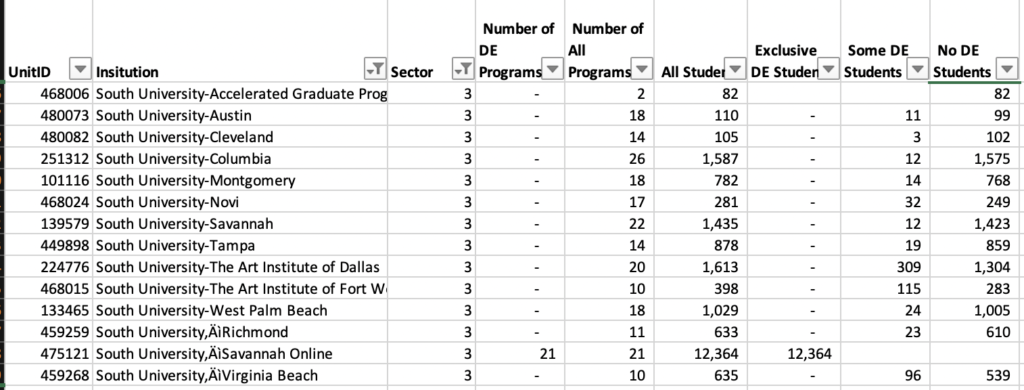
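The pooling effect described above can be sketched with a few lines of arithmetic. The institution counts below are hypothetical, picked only to show the mechanism; they are not the IPEDS figures from the table, and the sector labels are just illustrative keys.

```python
# Hypothetical institution counts, chosen only to illustrate the pooling effect.
# They are NOT the IPEDS figures shown in the summary table.
sectors = {
    "for-profit 4-year": {"institutions": 400, "with_online_program": 120},
    "for-profit 2-year": {"institutions": 1600, "with_online_program": 16},
}


def share(with_online: int, total: int) -> float:
    """Fraction of institutions reporting at least one online program."""
    return with_online / total


# Share computed separately for each sector.
per_sector = {
    name: share(s["with_online_program"], s["institutions"])
    for name, s in sectors.items()
}

# Share computed after pooling both sectors into a single "for-profit" bucket.
pooled = share(
    sum(s["with_online_program"] for s in sectors.values()),
    sum(s["institutions"] for s in sectors.values()),
)

for name, value in per_sector.items():
    print(f"{name}: {value:.1%}")
print(f"pooled for-profit: {pooled:.1%}")
```

With these made-up counts, a 30 percent share in the 4-year sector shrinks to under 7 percent once the much larger group of small 2-year schools is folded in, even though nothing about the 4-year sector has changed.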

The second issue is that simply measuring for-profits by institution using Completions program-level data is an unreliable approach to understanding online education supply, particularly for the for-profit sectors. Most for-profit systems own a number of smaller campuses, each with their own IPEDS code, yet the online programs are offered centrally by the system. And the Completions survey DE data has major holes in it. Consider South University (part of EDMC as of the 2012 data shown below):

All 21 online programs are offered through the online campus, with over 12,364 students taking exclusively DE courses and 8,898 taking no DE courses. Using the AEI methodology, 13 of the 14 institutions have no online courses or programs – almost no supply of online education in their language.

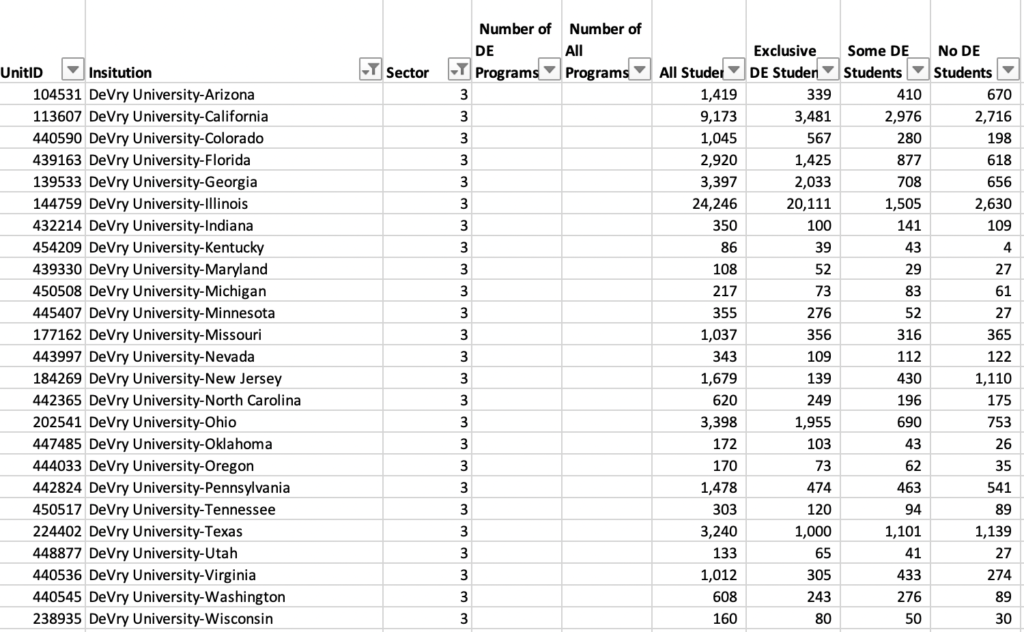
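As a rough sketch of why the institution-level count misleads here, the South University figures quoted above can be compared two ways. This assumes the two student counts cover the same set of campuses, and it leaves out students taking some but not all courses online, since that number is not given in the text; the variable names are illustrative only.

```python
# Figures quoted in the post for South University (2012 IPEDS data).
campuses_total = 14
campuses_with_online_programs = 1   # only the online campus; 13 of 14 report none
exclusively_de_students = 12_364    # the post says "over 12,364"
no_de_students = 8_898

# Institution-level view, in the style of the AEI supply metric:
# what fraction of IPEDS units report at least one online program?
institution_share = campuses_with_online_programs / campuses_total

# Student-level view, restricted to the two groups quoted in the post.
# Assumption: students taking SOME DE courses are omitted because the
# post does not give that count.
student_share = exclusively_de_students / (exclusively_de_students + no_de_students)

print(f"Institutions reporting online programs: {institution_share:.1%}")
print(f"Students exclusively online (of the two groups): {student_share:.1%}")
```

Under those assumptions, roughly 7 percent of the system’s IPEDS units show any online supply, while well over half of the students in the two quoted groups are studying exclusively online.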

Also consider DeVry University, which does not list a centralized online campus yet has significant online presence. For whatever reason, they report the student enrollment data per campus, but they did not fill out the Completions program-level data at all. Zero supply of online education in AEI’s approach.

My therapists jumped in at this point and convinced me to not fully duplicate the AEI findings (serenity now!!!). What’s important here is that the basis of AEI’s description of online education supply, using institutional metrics that are dubious and ignore how the for-profit sector works, is flawed and misleading. Technically they used data in IPEDS, but they misunderstood its usage and limitations.

Yes, there are valuable parts of this report. But like the GMU and Stanford reports, the flaws in analysis make it very difficult to separate the good from the bad and the ugly. This type of report from well-funded organizations aimed at policy-makers should inform, not confuse, but yet again we are faced with some serious flaws. We need better.
