Caroline Hoxby from Stanford University just published a working paper for the National Bureau of Economic Research (NBER) claiming to analyze “The Returns to Online Postsecondary Education”. tl;dr This report is a hot mess that conflates online students, enrollments, programs, and institutions, and it uses a bizarre and misleading data set for its analysis.

The headline for most coverage of this report will likely follow the highlighted section of the abstract:

This study analyzes longitudinal data on nearly every person who engaged in postsecondary education that was wholly or substantially online between 1999 and 2014. It shows how much they and taxpayers paid for the education and how their earnings changed as a result. I compute both private returns-on-investment (ROIs) and social ROIs, which are relevant for governments—especially the federal government. The findings provide little support for optimistic prognostications about online education. It is not substantially less expensive than comparable in-person education. Students themselves pay more for online education than in-person education. Online enrollment usually does raise a person’s earnings, but almost never by enough to cover the social cost of the education. There is scant evidence that online enrollment moves people toward jobs associated with higher labor productivity. Calculations indicate that federal taxpayers fund most of the cost of online postsecondary education and are extremely unlikely to recoup their investment in the form of higher future tax payments by former students. The evidence also suggests that many online students will struggle to repay their federal loans.

I’m all for solid research to inform public policy discussions, but it is deeply problematic when the underlying data is such a mess that it’s nearly impossible to separate the baby from the bath water. What are some of the problems? Let’s just start with the simpler “exclusively online” analysis.

Nearly Every Person

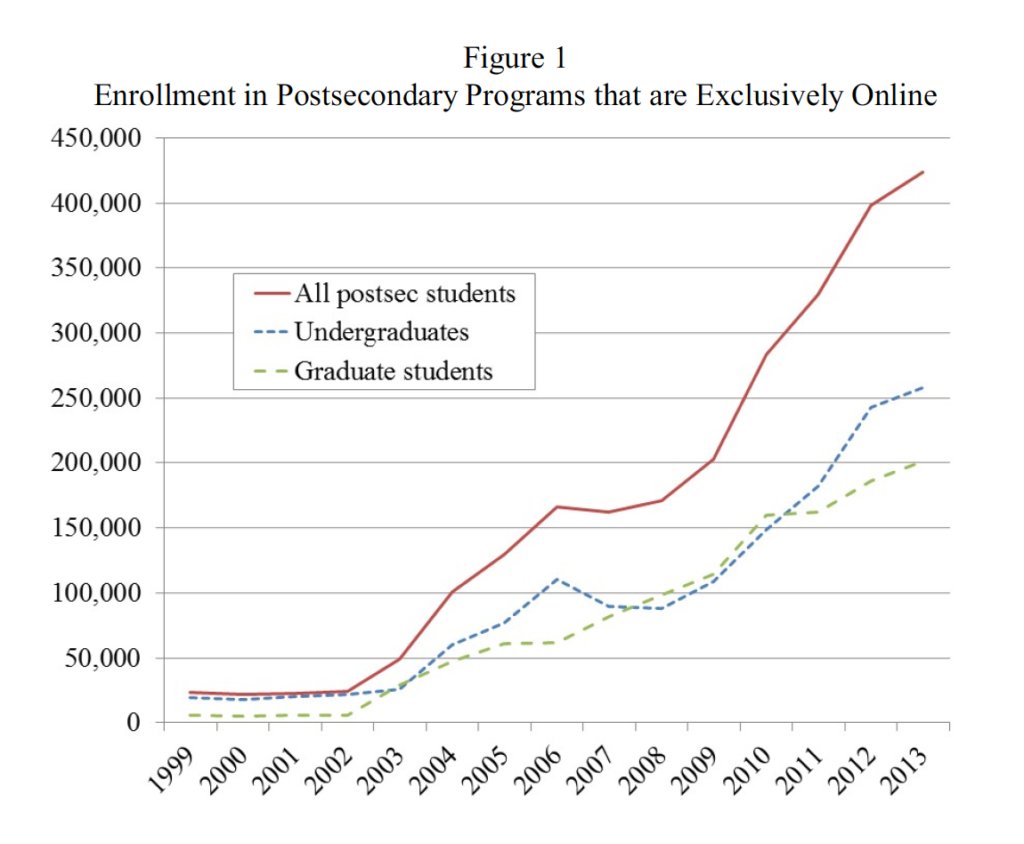

The abstract and paper start with the claim to look at “nearly every person who engaged in postsecondary education that was wholly or substantially online between 1999 and 2014”. But go to figure 1, and you see 424 thousand students “exclusively online” in 2013 (~254 thousand undergrad and ~150 thousand grad).

The data source for enrollments is the Integrated Postsecondary Education Data System (IPEDS), which has been the basis for our analysis of online education here at e-Literate as well as for the Babson Survey Research Group and WCET. We’ve done the data analysis, and for Fall 2013, the IPEDS data showed far different numbers, as seen in our post here. There were ~2.7 million exclusively online students that year, with ~2.0 million undergrad and ~670 thousand grad. That is an enormous difference coming from the exact same data set – how did we get from 2.7 million to 424 thousand?

The fundamental flaw in the NBER paper is that in the effort to translate institution-level data to student-level data to allow tracking of IRS data for returns on investment, the researcher uses a bizarre and misleading definition of “exclusively online” and “substantially online”. For simplicity, let’s just look at exclusively online.

IPEDS asks postsecondary schools the following: (1) Are all programs at your institution offered exclusively via distance education? (2) How many degree/certificate-seeking undergraduates are (a) enrolled exclusively in distance education courses, (b) enrolled in some but not all distance education courses, (c) not enrolled in any distance education course? (3) Repeat question (2) for non-degree/certificate-seeking undergraduates and for graduate students.

A student is classified as attending “exclusively online” if the answer to question (1) is “yes” or if the probability that he or she is enrolled in distance education is 100 percent based on the answers to questions (2) and (3). For instance, if a student were enrolled in graduate coursework, and all graduate students were enrolled exclusively in online courses (possibility (2)(a)), then the student would be classified as exclusively online. Note that undergraduate and graduate students at the same institution could be classified differently.

By following these rules, I was able to roughly duplicate the paper’s figure of 424 thousand exclusively online students in 2013. The results:

- Total enrollment from any school that self-identifies as distance education (DE) only, or grad enrollment where the number of exclusively-DE grad students equals the total number of grad students, or undergrad enrollment where the number of exclusively-DE undergrad students equals the total number of undergrad students. Think Western Governors University.

- The rules exclude any enrollment at all where exclusively-DE grad students or undergrad students are mixed with students who are not exclusively DE. Think Liberty University. Despite its 69 thousand exclusively online students and its 5 thousand face-to-face students, none of those enrollments count in this study. This is ludicrous, and the vast majority of exclusively-online students in this country attend a college or university that also offers face-to-face programs.

- Consider that the University of Phoenix classifies all of its online students in the UoP-Online Campus, whereas DeVry University assigns all of its online students to the local traditional campus. All of the University of Phoenix online students are considered “exclusively online” in the NBER study and none of the DeVry students are. Both are for-profit systems, but the results are very different.

This is how you get from 2.7 million to 424 thousand “exclusively online” students. And the methodology is so arbitrary that the problem is not as simple as saying “oh, this is really about for-profit students.” There is no easily-understood subset. And remember that the paper claims to study “nearly every person who engaged in postsecondary education that was wholly or substantially online between 1999 and 2014”.
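To make the filtering concrete, here is a minimal Python sketch of the “exclusively online” rule as I read it from the paper’s description above. It is not the paper’s actual code. The `Institution` fields and function names are my own, and the two sample schools are invented: the mixed school’s totals echo the Liberty University example (69 thousand exclusively online, 5 thousand not), but the undergrad/grad split is made up.

```python
from dataclasses import dataclass


@dataclass
class Institution:
    # Hypothetical field names; they only mirror the IPEDS questions quoted above.
    name: str
    all_programs_distance: bool  # question (1)
    ug_total: int
    ug_exclusively_de: int       # question (2)(a)
    grad_total: int
    grad_exclusively_de: int     # question (3), option (a)


def paper_exclusively_online(inst: Institution) -> int:
    """Enrollment counted as 'exclusively online' under the paper's rule as described."""
    if inst.all_programs_distance:
        return inst.ug_total + inst.grad_total
    counted = 0
    # A level counts only if every student at that level is exclusively DE.
    if inst.ug_total > 0 and inst.ug_exclusively_de == inst.ug_total:
        counted += inst.ug_total
    if inst.grad_total > 0 and inst.grad_exclusively_de == inst.grad_total:
        counted += inst.grad_total
    return counted


def ipeds_exclusively_online(inst: Institution) -> int:
    """Exclusively-DE students as the institution itself reports them."""
    return inst.ug_exclusively_de + inst.grad_exclusively_de


# Invented sample data, for illustration only.
online_only_school = Institution("Online-only school", True, 50_000, 50_000, 10_000, 10_000)
mixed_school = Institution("Mixed school", False, 40_000, 36_000, 34_000, 33_000)

for school in (online_only_school, mixed_school):
    print(school.name, paper_exclusively_online(school), ipeds_exclusively_online(school))
```

Under this reading, the mixed school reports 69 thousand exclusively online students to IPEDS, yet contributes zero to the paper’s “exclusively online” count.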

The “substantially online” definition is even worse, based on probabilities derived from misapplied IPEDS data.

A student’s coursework is classified as “substantially online” if the probability that his or her courses are online is greater than 50 percent where the probability assigned to option (2)(a) is 100 percent, option (2)(b) is 50 percent, and option (2)(c) is 0 percent. Unfortunately, it is not possible to classify substantially-online experiences more precisely. Clearly, the substantially online category is imprecise and contains students with a variety of online experiences.

This “substantially online” category does capture Liberty University and DeVry University, for example, but it misses the difference between a subset of students taking exclusively online courses and students taking some online courses within a face-to-face program. If you want to study online, you need to view Liberty University’s 69 thousand online students as what they are – exclusively online – and their 5 thousand face-to-face students as what they are.
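The quoted probability rule can be sketched the same way. This is one reading of the paper’s description, not its actual method: I am assuming the probability is an enrollment-weighted average of the three IPEDS options for a given level at a given institution. The function names and both sample groups are hypothetical.

```python
def online_probability(exclusive: int, some: int, none: int) -> float:
    """Weighted share of online coursework: (2)(a) = 100%, (2)(b) = 50%, (2)(c) = 0%."""
    total = exclusive + some + none
    if total == 0:
        return 0.0
    return (1.0 * exclusive + 0.5 * some) / total


def is_substantially_online(exclusive: int, some: int, none: int) -> bool:
    """The quoted rule: probability greater than 50 percent."""
    return online_probability(exclusive, some, none) > 0.5


# Two invented student bodies of 100 students each.
split_population = (60, 0, 40)    # 60 fully online, 40 fully face-to-face
blended_population = (20, 80, 0)  # mostly students mixing online and in-person courses

for group in (split_population, blended_population):
    print(group, online_probability(*group), is_substantially_online(*group))
```

Both groups come out at the same probability and both land in “substantially online”, even though one is two distinct populations and the other is mostly blended. That is the distinction the category cannot see.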

For what it’s worth, consider that in 2013 there were an additional 2.8 million students in the US who took some of their courses online but not all.

Update: When you total up the 424 thousand “exclusively online” students with the 1.1 million “substantially online” students, you get roughly 1.5 million total online students for 2013. The IPEDS data for that year shows 2.7 million exclusively online students and 5.5 million taking at least one online course. By any measure this report does not look at “nearly every person” who took online education coursework.
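As a quick sanity check on those totals, here is the arithmetic using only the rounded figures quoted in this post (the variable names are mine):

```python
# Rounded 2013 figures quoted in this post.
paper_exclusive = 424_000      # paper's "exclusively online"
paper_substantial = 1_100_000  # paper's "substantially online"
ipeds_exclusive = 2_700_000    # IPEDS exclusively online students
ipeds_any_online = 5_500_000   # IPEDS students taking at least one online course

paper_total = paper_exclusive + paper_substantial
exclusive_share = paper_exclusive / ipeds_exclusive
any_online_share = paper_total / ipeds_any_online

print(f"Paper total online students: {paper_total:,}")
print(f"Share of IPEDS exclusively online students: {exclusive_share:.0%}")
print(f"Share of IPEDS students with any online course: {any_online_share:.0%}")
```

On these rounded numbers, the paper’s “exclusively online” group is roughly 16 percent of the IPEDS exclusively online count, and its combined total is roughly 28 percent of students taking at least one online course.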

Conflation Elation

The report’s description is a mess on terminology. “Exclusively online” and “substantially online” are associated with students, courses, programs, institutions, and a sector. If you take the trouble to look at the methodology, the actual analysis is based on total grad enrollments or total undergrad enrollments at institutions which make it through the bizarre IPEDS filtering method described above.

Questionable Trends

I also have a problem when a report makes simple offhand comments not backed up by its own data [emphasis added].

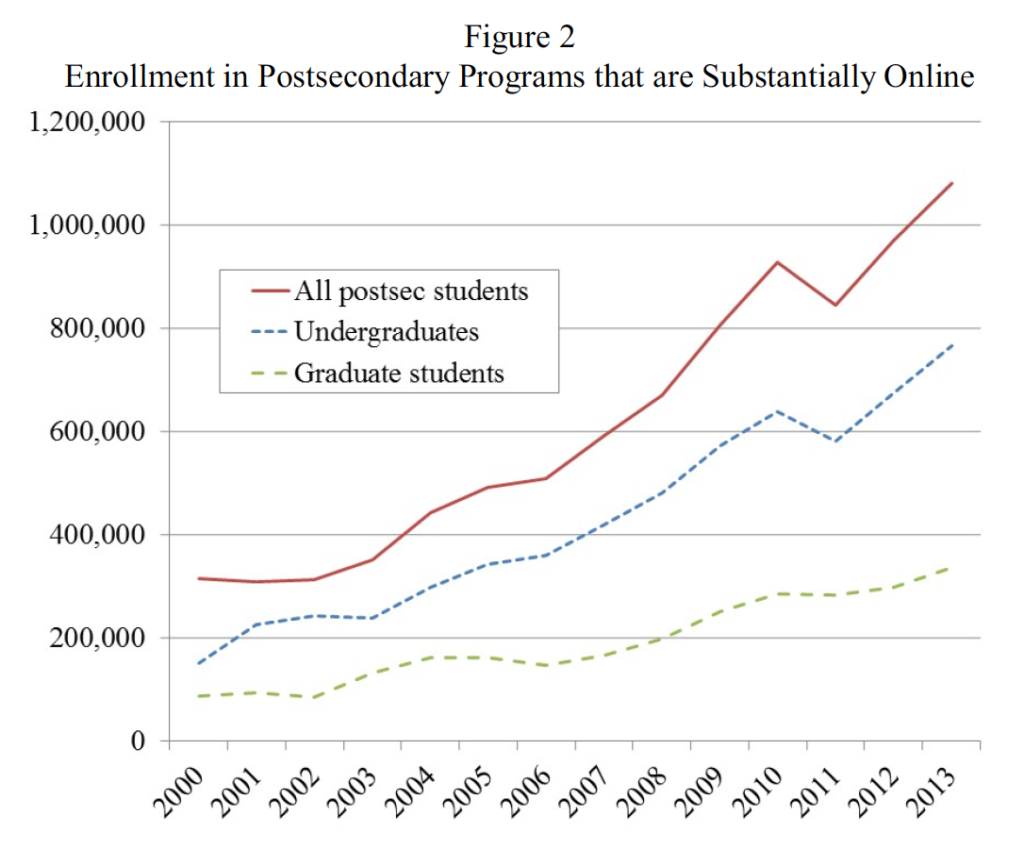

Online postsecondary enrollment has grown very rapidly in recent years. Figures 1 and 2 show the number of students enrolled in coursework that is, respectively, exclusively and substantially online. (The exact definition of substantially online is given below, but think of it as more than half online.) Both figures show that enrollment grew dramatically after 2005. This is not an accident or an effect of broadband access. Rather, 2005 corresponds to the year in which the U.S. Department of Education eliminated the “50 percent rule” that required an institution’s enrollment to be at least 50 percent in-person for its students to qualify for federal tax credits, tax deductions, grants, loans, and other financial aid. This rule constrained the growth of online education because an institution had to recruit and have a campus (or campuses) to support one in-person student for each online student.

In the case of figure 1 above (exclusively online), there is no apparent increase in enrollment until 2008 / 9. In fact, the data levels off from 2005 – 2008, with slowing growth. What about figure 2 (substantially online)?

In this case, enrollment growth seems to continue the same trajectory around 2005, with some acceleration in 2008 or 9, but much smaller than for exclusively online growth.

Did the 2005 change to the “50 percent rule” affect online education? I’m sure it did, but the data in these figures do not support the claim, which is repeated later in the report.

In section 2, I define online postsecondary education and describe its explosive growth since 2005.

Elastic, Whatever

The report’s main goal is to analyze whether the “returns” of online postsecondary education, in terms of increasing salaries and economic benefits, are better or worse than those of traditional face-to-face education. For the “control group”, the author chooses non-selective institutions with low online enrollments. [emphasis added]

Finally, I classify a student as hardly online and at a non- selective institution if his or her school will enroll any student with a high school degree or GED in undergraduate coursework or enroll any student with a baccalaureate degree in graduate coursework. Because nearly all exclusively online and substantially online institutions are non-selective, this final category (hardly online and non-selective) is the best comparison for online schools. Indeed, recent evidence suggests that it is these institutions that are most likely to lose students to online postsecondary schools. Put another way, students who attend non-selective institutions are more elastic between online and in-person settings than are students who attend selective ones.

This view of online education – students choosing between non-selective face-to-face institutions or online institutions – takes a zero-sum approach, as if the same student population were simply choosing between institution types. This view ignores the large and growing number of working adults who can only attend college – often in degree-completion programs or master’s-level programs – because of an online option. Their real choice should be seen as an online institution or nothing at all.

Why Should We Care?

This review may seem harsh, but reports like this NBER paper have tremendous influence on policy discussions. This paper will likely be referred to frequently in federal government, state government, and institutional board meetings. How can you perform analysis on such a flawed data set and a flawed understanding of the topic of online education? Hopefully I’m wrong about the potential influence of this report.

Update: See follow-up post: “One More Thing on NBER Report: Where did pre-2011 data come from?”

Also, while writing this I missed an excellent point by Sean Gallagher about the over-representation of for-profits.

Anticipating much discussion abt this important Hoxby paper 'this look right? Did quickly @RussPoulin @rkelchen Key is pg 7 "Online schools" pic.twitter.com/Llbd0mAsrp

— Dr. Sean Gallagher (@HiEdStrat) February 27, 2017
