I’m seeing a lot of chatter online about the recently released Blackboard report and this slide in particular:

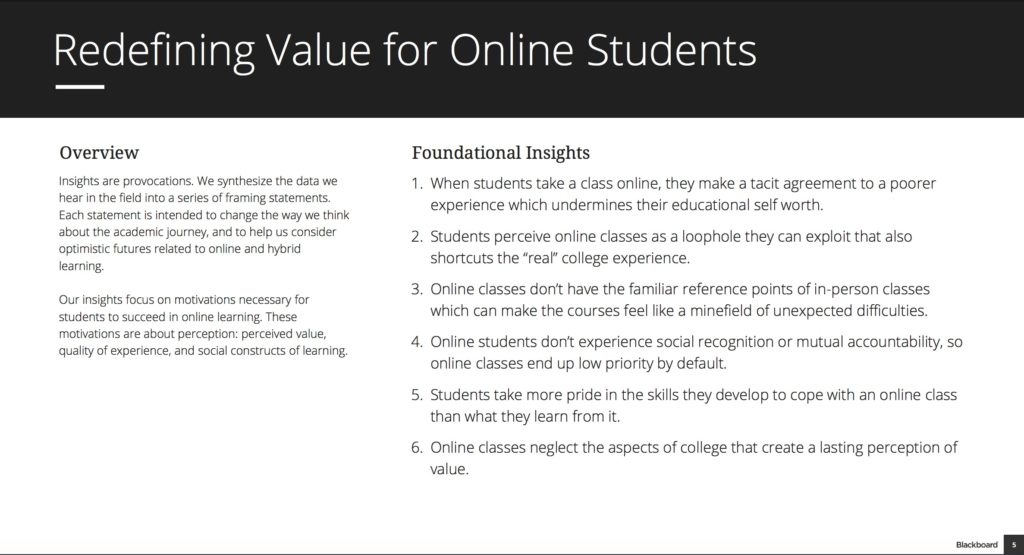

Foundational Insights

- When students take a class online, they make a tacit agreement to a poorer experience which undermines their educational self worth.

- Students perceive online classes as a loophole they can exploit that also shortcuts the “real” college experience.

- Online classes don’t have the familiar reference points of in-person classes which can make the courses feel like a minefield of unexpected difficulties.

- Online students don’t experience social recognition or mutual accountability, so online classes end up low priority by default.

- Students take more pride in the skills they develop to cope with an online class than what they learn from it.

- Online classes neglect the aspects of college that create a lasting perception of value.

To make matters more interesting, the next slide elaborated on the first insight, stating:

Most students who enroll in an online class recognize and express that they are agreeing to a lesser experience.

Did Blackboard just commit an act of unintentional honesty, acknowledging that students don’t like online courses in general? That would be quite the headline. But it would not be accurate.

I was quite curious about how to interpret these findings, since the report clearly states that it is based on Contextual Inquiry and Participatory Design aimed at helping Blackboard “empathize with their unique experiences”. But which students did they talk to, and should anyone extrapolate these findings beyond the “unique experiences” of the specific students participating in the study? The report simply presented no context to help answer these questions. As Peter Shea mentioned in the comments to Mike Caulfield’s post:

This report is interesting. I think there are issues in how methods are portrayed and in the language used in generalizing findings. We know for example that the methods are qualitative and therefore are not intended to be generalizable in any statistical sense.

I had similar questions, so I asked Blackboard for clarification. Katie Blot was kind enough to send me an extensive explanation by email with a phone call follow-up today. While I can’t share all of the details, I will paraphrase. The short answer is they talked to very few students, and it is a mistake to generalize the results. This type of design research is meant to “provoke new product and service design”. The slide shared above states:

Insights are provocations. We synthesize the data we hear in the field into a series of framing statements. Each statement is intended to change the way we think about the academic journey, and to help us consider optimistic futures related to online and hybrid learning.

At my request, Katie shared the following statement to provide more detail.

We have done many of these types of studies that include students, faculty and other personas of various demographics on a variety of topics. We do it for internal purposes and, when the team finds insights it thinks may be of interest, we share through our blog. It is also important to note that this is not the only type of research we do. We also do quantitative research on large, statistically significant samples of users to understand behaviors on a larger scale. It is the combination of these two types of research, one that helps us understand what people do and the other that helps us understand why, that uniquely shape our thinking about how we need to scope and design our products and services.

While Blackboard had good intentions in taking their internal design research and sharing it publicly, they made a mistake by not considering how the community would interpret the implied results, especially by not addressing the sample size or which student groups were represented. I think there is a misperception in the ed tech community that you either need academic research rigor, with p values and removal of statistical bias, or you have nothing. This type of public release of research calls for something in between: a description with enough context to allow readers to decide whether or not to extrapolate. Blackboard provided this context on the applied ethnographic nature of the research but not on the very important questions of student sample size and groupings.

For what it’s worth, the students were mostly of traditional age (18-24) and came from a community college and two research universities. I could see this research being generalizable to those institutions, or at least to the specific academic programs the students are in, but I am not at liberty to share the school names.

Since Mike Caulfield raised the question, I asked for his reaction to this explanation.

It’s not that far from what I would expect, really. The point with this sort of stuff as far as I understand it is to look for stories that you may be missing, or may not have seen as dominant, and then see if you can see them elsewhere. You’re not trying to prove something, you’re trying to see what you might be missing about the experience. You use this to build products, and look for your confirmation there.

One of the comments on my post last night came from Karen Swan of the University of Illinois Springfield, who gave a very interesting teaser for an upcoming research publication.

We have an article coming out in a special issue of Online Learning on learning analytics that used around 650,000 student records from the Predictive Analytics Reporting (PAR) Framework to explore retention and progression in 5 community colleges, 5 four-year universities, and 4 primarily online institutions. We grouped students by how the courses they took in their first 8 months were delivered — only on-ground, only online, or some of both — and found that the only students hurt by taking online courses were community college students taking only online courses. In many cases, however, students taking some of both had better rates of retention and progression across all three institutional types. So it looks like not only are significant numbers of students blending their classes, but it is paying off for them.

I look forward to seeing this article.

In summary:

- Don’t interpret Blackboard’s research as showing that online learning is a poorer experience in general; instead, treat it as a provocative framing statement meant to help people change how they perceive students’ academic journeys, particularly in mixed-course programs (a mix of online and face-to-face courses).

- Blackboard should provide more context when they publicly share internal research.

- Nevertheless, this is quite interesting research that frames the student perception of online courses when mixed with face-to-face courses.
