Last week, Pearson announced the release of some resources they have created around science-based learning design. (Full disclosure: Pearson is a client of our consulting company, although we have not consulted with them on the subject of this post.) The resources in the release include the following:

- A 102-page rubric the company has started using for evaluating curricular products based on their effective use of learning design principles, released under a Creative Commons license

- A white paper describing how they are beginning to apply these principles to their own product designs

- A few links to descriptions of early examples of these principles as they have been applied in released products.

- A blog post providing some more background and perspective by David Porcaro, Pearson’s Director of Learning Design, whose team is behind this effort.

In the short term, you should think of this publication as the company’s effort to “show their work,” in the classroom sense of that phrase. They want you to know more about the process they go through to incorporate research-backed learning design principles into their products. They hope that doing so will increase your confidence in the value of those products. In the medium term, they also aspire to make the framework they are developing for themselves a useful tool for the academic community, particularly as the company refines and expands on this early release of the materials.

In my view, the work itself is a significant contribution. It also is a positive indicator about Pearson’s future direction as a participant in and influencer of that community, although how strong an indicator is a much harder question to evaluate. And it gives us another clue about the co-evolution of educational institutions and ed tech vendors that we are likely to see over the next years and decades. In this post, I’m going to evaluate each of these aspects in turn.

The Thing Itself

Let’s start with what the white paper frames as the goal of the project:

In recent decades, the field of learning design has matured, bringing extensive research to bear on the creation of learning experiences and environments. Products and systems that effectively leverage learning design can deliver superior learning outcomes. As we design ever more sophisticated digital learning environments, it has become even more important to incorporate these research-based techniques into our work.

This is not easy. Well-designed educational technology has often lacked a learning sciences base, and many research-based education products have lacked a compelling user-centered design. How can world-class user experience (UX) design— grounded in a fail-fast culture—and educational research— grounded in rigor—peacefully coexist?

Three years ago, Pearson embarked on a journey to find out. This paper shares what we’ve learned and presents specific examples of how we are incorporating best-practice learning design in actual products.

This is a really important problem to address. Academic research—particularly research that can directly help humans if successful or harm them if unsuccessful or misapplied—proceeds at a deliberate pace. By design. Technology development—particularly technology that is designed to meet the needs of individual consumers or end users—takes the approach of getting early versions of products into the hands of users quickly, even if those versions are not very good, so that the designers can get feedback sooner and therefore learn and improve faster. These two very different approaches to creating an empirical feedback loop collide in educational software development. I can’t recall hearing or reading anybody framing this problem explicitly. And yet, every company that produces educational technology and every educator, student, or institution that uses educational software or evaluates its efficacy has to deal with this tension and its consequences, whether they realize it or not.

So Pearson is calling out an important and underappreciated problem. They also have a novel answer regarding who on the product development team should own that problem. On the one hand, the company is visibly highlighting the relationship of learning design-based efficacy to UX rather than to data science. This is both highly revealing and different from what we are seeing at some other ed tech companies. As a discipline, UX is anthropological. It’s human-centric. Data science isn’t irrelevant or even peripheral to educational software design any more than brain science is peripheral to research on effective educational practices. But there’s a lot that happens in between theory and application, and the connections are not necessarily direct. By positioning their learning design project relative to their UX work, the company is making the focus visibly human-centric, not only in the product designs themselves but in the design processes they employ.

On the other hand, learning design in Pearson is also not owned by the editors and content experts. In some companies, the terms “learning design” and “instructional design” are used interchangeably. But the term “instructional design” has strong associations (whether accurate or not) with the authoring and presentation of content. So companies that treat “learning design” the same as “instructional design” often incorporate their experts into the content development process. Pearson’s association of learning design with user experience design in their public discussion is not just an intellectual exercise. Their learning design team reports to the product side of the house, not the editorial side (although they work with both sides closely). In setting up the relationships this way, the company has made an important decision that incorporating learning design into their products will not be confined to how content is presented but instead will focus on facilitating the goals of students and educators through both content and functionality. (We’ll see more evidence of this when we look at the rubric itself.)

In his blog post, Porcaro elaborates on the contribution that Pearson thinks it can make and, in doing so, provides some more connections between Pearson’s view of learning design and UX:

As a part of this movement, Pearson is pioneering the application of learning sciences to education products at scale. For decades, many education research projects focused on basic or evaluative research, leading to discoveries shown to impact learning, but failing to do so at scale. On the other hand, many educational technology products have been built on solid user experience and market research, but have failed to impact learning. In the learning experience design team, we’re implementing a principle-based design process in which we apply design-based research methods to a variety of Pearson products across disciplines, supporting the outcomes of millions of learners globally (building on such efforts as Clark & Mayer, 2002; Gee, 2007; Koedinger, Corbett & Perfetti, 2012; and Oliver, 2000).

Using both the design thinking methods of user experience (Kelley & Kelley, 2016), and the design-based research methods of the learning sciences traditions (Reeves & McKenney, 2013), we’re building, applying, and refining a set of forty-five Learning Design Principles. By doing so, we’re working at the nexus of education research (i.e., products based on research) and product efficacy (i.e., research-based products that evidence impact on outcomes). In that messy and exciting space of innovation, we’ve established a design function and process that allows us to build products that meet user needs, are delightful and usable, and, most importantly, impact learning.

Note the first sentence: “Pearson is pioneering the application of learning sciences to education products at scale.” Don’t get hung up on the word “pioneering.” The educator community has justifiably developed a hyper-sensitive gag reflex to vendor language that has even a hint of grandiosity. But take the word in the full context of the release. The final heading of Pearson’s white paper is this:

Learning design: It’s hard, but it works

Wait. What?

Learning design: It’s hard, but it works

No robot tutor in the sky? No Netflix of education? No disrupting education?

Nope. The emphasis throughout these materials is on academic research and on methodically developing a craft for its effective application. It’s pretty humble in tone.

The truth is that we really, genuinely don’t have a mature discipline for applying learning sciences in ways that have the ability to move beyond individual classroom experiments and impact many students’ lives. “Scale” is not an industrial term here but an expression of ambition for learning impact. In the white paper, Pearson formulates this problem (or at least an important component of it that affects the development of software that could help) as the impedance mismatch between educational and UX research methods. So a charitable synonym for Porcaro’s use of “pioneering” here might be “bushwhacking.” They are attempting to clear a trail through some dense underbrush.

And how are they doing that? We’re going to get to the details momentarily, but notice that Porcaro cites academic literature from both UX and learning science research. And not just any research. This is research on solving the problem of bridging from theory to practice. For example, the Koedinger, Corbett, & Perfetti paper he references is specifically about finding the right level of granularity and generalizability of learning research to be broadly applicable and impactful in practical applications. This specific methodological grounding is going to turn out to be the first link in a chain of different research approaches that Pearson is connecting in their attempt to bushwhack through the problem of the “application of learning sciences to education at scale.”

If there’s one word in Porcaro’s sentence that’s a little more grandiose than the actual work justifies, it’s “education.” What Pearson is really trying to do, first and foremost, is apply learning sciences to designing educational technology products at scale. The difference is squishy because ed tech products can only be considered useful or effective in a given educational context with a given set of educational goals. So you can’t fully decouple applying learning science research to educational products from applying it to education writ large. But to repeat the punch line of the previous quote,

In that messy and exciting space of innovation, we’ve established a design function and process that allows us to build products that meet user needs, are delightful and usable, and, most importantly, impact learning.

The rubric Pearson is sharing is clearly designed to be a tool for their internal teams to weave research-backed learning design principles into the product team’s basic design process.

Speaking of the rubric, let’s get to it.

It consists of 45 principles of learning design that Pearson thinks are important to consider when evaluating whether their product designs have a credible chance of having learning impact. Notice the wording there. You can’t know if those products will actually have a learning impact until they are tested in the real world with real students. These principles are another link in the chain of applying learning sciences to designing educational technology products at scale. They are not the final link. But they are an important one because they get the product design team to be asking good educational questions from the beginning.

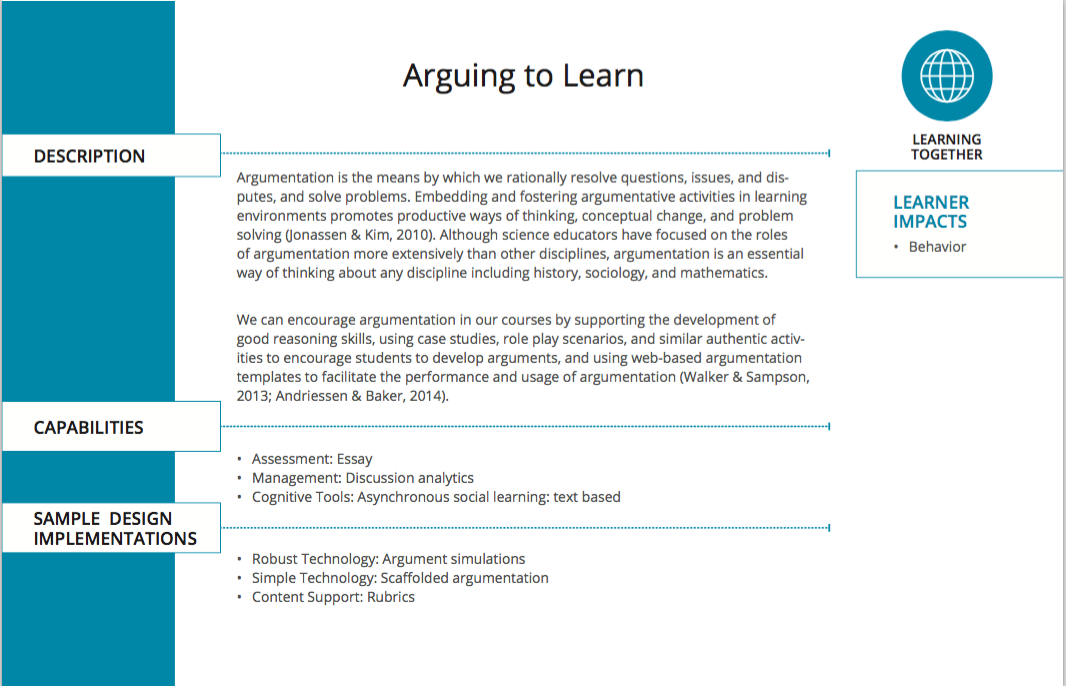

Each principle starts with a card that gives an overview. For example, here’s the overview card for the learning design principle “Arguing to Learn:”

In this first release, Pearson has clearly given us a document that was written primarily for internal use. There is no user’s guide. Some of the elements are opaque. Even so, a fair amount of it is clear without additional information. We have a basic description of the principle, which includes one or more references to the academic research on which it is based. (There is a multi-page bibliography at the end of the document.) We are given a “simple technology” sample implementation of “scaffolded argumentation” (for example, a description and example for students of a developed argument) and a “robust technology” sample implementation of “argument simulations” (maybe a chat bot that asks students questions intended to elicit argumentation and evidence). Notice how the implementation could lean either toward static content to be animated by teacher and student activity or toward functionality that enables the product to become an active class participant. If you’re a textbook editor trying to get up to speed on ed tech product design, this is helpful because it connects a principle that you may know intimately from your textbook product design to a world of software that can sustain interactions with humans in increasingly sophisticated ways.

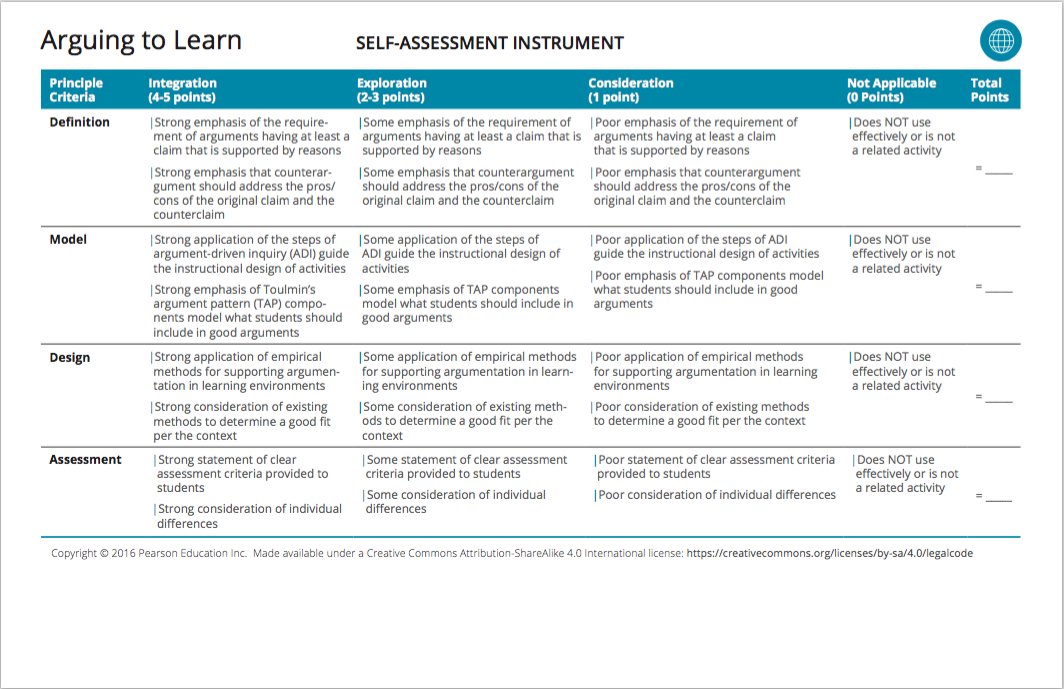

Each principle then has rubric (or “self-assessment instrument”) like this one:

Here again, not everything is unpacked for us. For example, what is the “Argument Driven Inquiry (ADI) Guide”? Is that something in one of the research references? Is it a Pearson-internal artifact? I’d have to do more digging to figure that out. But presumably, if you’re on a team designing a product for which the “arguing to learn” learning design principle makes sense to support, you would be briefed by the learning design team or provided with appropriate documentation to read.

But we don’t have to understand all the details to get a basic picture of how these cards are intended to help embed research-based learning design principles into product design efforts where most of the team members are not learning design experts. Imagine each product team in Pearson sitting down in a meeting together, going through these cards one by one, applying the rubric to their product design, and brainstorming ways in which they could improve their scores. That’s what we’re talking about. That’s what Pearson wants us to know that they are doing.

The Value of the Thing Itself

As I wrote at the top of the post, the short-term value of this document is primarily as a tool for understanding and evaluating Pearson’s efforts to improve their product efficacy. As such, it is already paying dividends for them. When the rubric was published, I posted a simple tweet with the URL of their report site and the comment “This is more significant than it appears.” That tweet got a surprising number of responses within a couple of hours, including from people who normally have no interest in Pearson whatsoever. One reply was by Lisa Janicke Hinchliffe, a professor and librarian at the University of Illinois at Urbana-Champaign, who took issue with the particular research base that the rubric used for information literacy. David Porcaro responded by inviting her to point him to a research base that she believes is superior. This is a clue to a larger opportunity that Pearson should think about carefully.

Ever since the company started their efficacy push, they have been talking about having their products “audited” by a third party to verify that they are, in fact, educationally efficacious. And while a lot of the original hype and organizational machinery behind the effort have fallen away, Pearson still intends to have Ernst and Young audit the efficacy of all their products. That always struck me as weird. First of all, while I respect the general intellectual caliber of the consultants and organizational capacity of E&Y, I don’t see how they can be terribly useful in this situation. Sure, they can bring an army of really smart Harvard MBAs to see if the measures of efficacy that Pearson has established have been met. But there is no equivalent to the “Generally Accepted Accounting Principles (GAAP)” in this field. In what way is E&Y qualified to judge whether the measures Pearson has chosen are the right ones?

But equally importantly, who is this audit for and what is it supposed to prove to them? Investors respect E&Y but probably don’t care about efficacy reports or, at the very least, won’t know how to interpret the implications of the results on the value of their investment in Pearson stock or bonds. Educators may care about the evaluation of Pearson’s products but won’t trust E&Y to give them that. I just don’t understand what Pearson CEO John Fallon is thinking here.

(Mr. Fallon, if you’re reading this and would like to enlighten me about your thinking—about E&Y or anything else—feel free to contact me. Any time. Really.)

Academia doesn’t really do “audits.” It does “peer review.” That little Twitter conversation between Porcaro and Hinchliffe was the 21st-Century evolution of peer review in microcosm. I will also note that EdSurge must have seen the stream of replies from my tweet (as well as Pearson’s press release) because their article picked up on many of the replies and the article’s author, Marguerite McNeal, followed up to get longer reaction quotes from some of the…er…twitterers. (Tweeters?) Hopefully the examination of the rubric that flows from the growing attention to the publication will lead to more feedback that helps Pearson improve its learning design processes and therefore its educational impact.

It also suggests a partial solution to a foundational flaw in Pearson’s “efficacy” formulation that I raised in my original post on the topic:

Let’s think some more about the analogy to efficacy in health care. Suppose Pfizer declared that they were going to define the standards by which efficacy in medicine would be measured. They would conduct internal research, cross-reference it with external research, come up with a rating system for the research, and define what it means for medicines to be effective. They would then apply those standards to their own medicines. And, after all is said and done, they would share their system with physicians and university researchers in the hopes that the medical community might be reassured about the quality of Pfizer’s products and maybe even contribute some ideas to the framework around the edges. How confident would we be that what Pfizer delivers would consistently be in the objective best interest of improving health? This is not entirely hypothetical; much of the drug research that happens today is sponsored by drug companies. Unsurprisingly, this state of affairs is viewed by many as deeply problematic, to say the least. It certainly doesn’t help the brand value of Pfizer. But at least much of that medical research is conducted by physicians and academic researchers and is subject to the scientific peer review process. Pearson is creating their framework largely on their own, selectively inviting in external participants here and there….

If Pearson were to say to faculty, “Here’s what we think we know about the efficacy of this product, here’s what we don’t know yet, and here is how we are thinking about the question,” they might get a number of responses. Maybe they would get, “Oh, well here’s how I know that it’s effective with my class.” Or “The reason that you don’t have a good answer on effectiveness yet is that your rubric doesn’t provide a way to capture the educational value that your product delivers for my students.” Or “I don’t use this product because it has direct educational effectiveness. It frees me up from some grunt work so that I can conduct activities with the class that have educational impact.” Most of all, if you’re John Fallon, you really want faculty to say to their sales reps, “Huh. I never thought about the product in quite those terms, and it makes me think a little differently about how I might use it going forward. What can you tell me about the effectiveness of this other product that I’m thinking about using, at least as Pearson sees it?” And you really want your sales reps to run back to the product teams, hair on fire, saying “Quick! Tell me everything you know about the effectiveness of this product!”

Pearson won’t get that conversation by just publishing end results of their internal analysis when they have them, which means that they have a high risk of failing to align their products with the needs and desires of their market if they think about the relationship between their framework and their customers in that way. I don’t think Pearson fully gets that yet.

The learning design work that Pearson has released so far doesn’t get the company all the way to a real collaboration with the academic community, but it provides the potential basis for one. It builds on various bodies of academic research—conspicuously and appropriately cited—and then creates connective tissue of a process for incorporating that research into end-to-end product design. Funnily enough, in the aforementioned EdSurge article, Pearson’s Managing Director, Global Product Management & Design Curtiss Barnes (who also happens to be David Porcaro’s manager) said,

We want our end-to-end process to conform to these principles.

So there you go. Pearson isn’t claiming to have come up with revolutionary products or revolutionary research or, really, revolutionary anything. They claim to be doing the hard work of knitting together research done by the academic community, testing out their framework on their own products and processes, and contributing both their labor and their scale of operation to propose and test ways of making that research practically beneficial to many more students and educators.

As with any peer-reviewable experiment, they have to start by showing their work. David Porcaro started creating the academic analogue of an audit trail with his blog post by citing the methodological literature upon which Pearson is building its effort. The rubric provides the next step in the audit trail by laying out the principles Pearson intends to apply with research citations and a framework for application. As the third step, the company is beginning to point to a few examples of the applications of those principles in shipping products. Assuming that evidence base continues to fill out, the next step in the audit trail will be research of product effectiveness in classes that is connected to the application of those learning principles. And my guess is that the academic community could provide a much more effective and more credible end-to-end audit following that chain (at a much lower price) than a big accounting firm.

But more importantly, one huge difference between an “audit” and “peer review” is dialog. Doing this work out in the open, in cooperation with the academic community, affords Pearson the potential of not just validating their efficacy but improving it, while building customer trust relationships at the same time.

This brings me to the fact that Pearson released their rubric under a Creative Commons Share-Alike license. There is a lot that could be criticized about this initial release as an attempt at academic collaboration. Much conversation on Twitter was devoted to the fact that the document was a PDF, for example. It’s hard to modify a PDF, which makes it less than ideal for certain kinds of reuse. And as I mentioned earlier, the cards are missing a lot of contextual information and basic instruction that could make them more useful. Nor has Pearson provided any effective channel or forum for dialog. There are many areas we can identify for potential improvement.

But set that all aside for a moment and ponder the fact that Pearson has released a hefty, research-based artifact, one that took their team months to put together and refine, one that they intend to use as an integral part of their product development process, under a Creative Commons license. Consider that some of the principles and criteria in their rubric overlap with instruments released by academic organizations like Quality Matters and the Online Learning Consortium. I can tell you from direct personal experience that Pearson has a serious and significant learning science talent pool at the company, not just on the learning design team but across their larger product design group. I know these folks. I’ve worked with them. They’re for real. Pearson probably has a larger collection of talent and experience across the various relevant disciplines and research areas than all but a few top research universities. Having this team share what they’re doing under an open license with some of these academic organizations, not to mention individual academic departments and university instructional design teams, is of non-trivial value.

Personally, my biggest complaint about Pearson’s 1.0 (or even beta) version of their sharing effort is the particular Creative Commons license that they have chosen. Academic membership organizations like Quality Matters and the Online Learning Consortium have to develop sustainability models, which means putting some things behind a pay wall. If Pearson were to remove the Share-Alike clause from their license, then these organizations and others like them could benefit from Pearson’s contributions by incorporating them into value-added membership services.

But I’d call that a quibble. Pearson has made what appears to be a good-faith effort to contribute here without puffing themselves up in the process. They have earned a modicum of credit and some patience to see what comes next.

Will the Real Pearson Please Stand Up?

Whenever I write a relatively positive analysis post like this one, or like my original analysis of Pearson’s efficacy strategy, I inevitably hear from academic folks who complain that their experience of Pearson is lousy products, poor support, crazy or incoherent marketing, clueless sales reps, or bad-faith lobbying for more standardized testing. They tell me that the Pearson I am writing about bears no relation to the “real” company that they encounter in their daily lives. “Come on! You know what they’ve done. Can’t you see what’s really going on?”

When I write a strong critique of something the company has done, I inevitably hear from Pearson employees who complain that the dumb comment or the ill-advised marketing piece I’m criticizing is completely disconnected from the real, serious work that the company is undertaking. They also tell me that the Pearson I am writing about bears no relation to the “real” company they encounter in their daily lives. “Come on! You know what we’re doing. Can’t you see where we’re really going?”

So which is true? Which is the real Pearson?

The first one. The second one. Both. Neither. Vendors and customers alike struggle to understand that there is no single objective answer to that question.

To illustrate this point with a simpler example before we get to the universe-unto-itself that is Pearson, let’s start by looking at a much smaller company: Blackboard. A while back, Blackboard announced that they were going to come out with this new thing they called Ultra. Phil and I spent a lot of time looking at it. We grilled the executives. We grilled the architects. We went to their UX labs and deliberately did things they told us not to do in the proof-of-concept demo.

We came away impressed. There are some seriously smart people working on Ultra. People who are good human beings, too. And the work-in-progress they showed us seemed both innovative and substantial. So we were cautiously optimistic.

What happened?

- The CEO, prompted by the CIO, made public promises of delivery dates that the product team knew from the beginning were wildly unrealistic.

- The Chief Product Officer came up with a product matrix that conflated the new user experience with the new SaaS option.

- The Chief Marketing Officer developed marketing materials that made a circuitry diagram for the space shuttle look simple by comparison and generally made an already abstruse product roadmap completely incomprehensible.

- Nobody explained any of what was going on to the sales force or the support team. Accordingly, some of the employees who talk to customers every day told them that Blackboard 9.x, which was the only version of Blackboard that customers could actually buy and use, was being killed off. Which wasn’t true. None of the reps could tell the customers anything about when Ultra would be ready or even what it really is. And some avoided talking about Ultra altogether in their sales pitches.

- Customers noticed that developer resources were being shifted from Blackboard 9.x—the only version of the product that customers could buy or use that some but not all of them had been told would be discontinued—to Ultra—the product that nobody could actually define to customers, much less tell them when, if ever, they would be able to buy and use it.

- The CEO, CIO, Chief Product Officer, and Chief Marketing Officer all quit or were fired. (Most were fired.)

- The new CEO is doing…stuff. We don’t really know what the impact will be yet. It’s too soon to tell.

- Ultra is still not available for customers to adopt as their primary institutional LMS. (Or LMS experience. Or something. We’re still not entirely sure how to explain what Ultra is and isn’t.)

There are still good, smart people doing good work inside Blackboard on developing functionality that has not yet been released to customers. That much is real. Ultra may turn out to be a smash hit. Or it may turn out to be a train wreck. Or something in between. We don’t know. We won’t know until customers are using it and are able to tell us whether it helps them in meaningful ways. In the meantime, who represents the “real” Blackboard? Who can tell us the “true” story? And how “real” is Ultra in any way that matters to customers?

Blackboard has one flagship product plus a small handful of others. Pearson has probably thousands of products and services. Blackboard has about 3,000 employees. Pearson has about 40,000. That is roughly the same number of people as the total population of Burlington, Vermont. You may think that’s not a good comparison. After all, Pearson has a CEO. It has a corporate structure. It has policies. It can hire and fire people. More than any town or city, surely it must have a definable and consistent purpose and direction…?

You might be surprised.

Is this learning design release the next stage in John Fallon’s efficacy master plan? Is it an unforeseen evolution that will nevertheless steer the company in new directions? Is it one of a million snowflakes of activity in the incoherent blizzard that is Pearson’s annual output? Is it something else? Something in between? Some combination?

I don’t know how to get a “real” answer to those questions. I know how to get an official answer. That’s easy. I could shoot an email to Curtiss Barnes and have a quote by the end of the day. And he would tell me the honest truth from his perspective. But would I get the same answer if I asked somebody who has a more regular and direct communication channel to the customers? Maybe somebody in the marketing department? Or a sales rep? What if I asked an editor? And if I asked a couple of marketing people, a couple of sales reps, and a couple of editors, would I get consistent answers? If I asked ten people—let’s even say that they were all mid- to high-level executives at Pearson—would I get one answer? Or ten different ones? If I asked CEO John Fallon these questions, what would he say, and how consistent would it be with these other answers? For that matter, how reflective would any of those answers be of changes that students and educators will experience in a year or two?

(Mr. Fallon, if you’ve read this far, then either you’re an insomniac masochist, you’re stuck in an airport or a bathroom somewhere, or you really do think that there is non-trivial value to this analysis. I really, truly would love to hear your thoughts on these questions. And if you’re stuck in an airport or bathroom somewhere, you may have some time on your hands. So…you know…feel free to call me.)

Our editorial perspective at e-Literate is that the most important reality is the reality in the classroom. While we know and care a lot about what’s going on under the surface in these companies, nothing is entirely real to us in vendorland unless and until it gets out of vendorland and into the real world of education. Pearson has made a good first foray with the learning design release. I want to believe. But it’s not really real until it starts reaching students and educators consistently through multiple channels directly from Pearson (rather than just through analysts like us or publishers like EdSurge) and until those students and educators start experiencing a real difference in their lives from it. Until that happens, a dumb quote about wanting to be the Netflix of education from an executive who reports directly to the CEO is just as reflective of the Pearson that is “real” to the customers as a web site with some good learning design content on it. No official answer from the company can solve this problem. This is true for Pearson, Blackboard, and every other vendor.

The (Really) Big Picture

In a recent post, I argued that the transformation in teaching ahead of us in light of the still relatively young but maturing learning sciences is analogous to the 30-year transformation of the medical profession into one based in science during the early decades of the 20th Century. I also argued that, lacking that transformation, the ed tech industry would remain stuck in the mud indefinitely. In a follow-up post, I suggested that vendors could play a valuable role in supporting and facilitating that evolution of the teaching profession.

Efficacy wasn’t a bad analogy as a first approximation for Pearson’s new value proposition to students and educators. I give them credit for their bravery on it. (That may sound like a weird statement to people who don’t live inside the educational publisher world. Trust me; in their world, it was a bold and risky move.) But the strategy as originally formulated is inevitably doomed to failure for the same reason that it would have been impossible to develop a pharmaceutical industry in the absence of a profession of skilled physicians who see pharmacology and medical sciences as essential tools for them as healers. Imagine a world in which the pharmaceutical industry “disrupted medicine” by selling prescription drugs directly to patients without the consultation of a doctor. What would “efficacy” mean in that world? What would health outcomes look like?

Like the human body, the human mind is incredibly complex. We are still in the very early stages of understanding it. And while we all can learn a lot about how to improve our physical and mental fitness on our own and are perfectly capable of self-prescribing in many cases, there are many other cases in which details are complex enough that we need real trained experts to help us achieve the best result possible. We need the illumination of science wielded by a smart, caring, expert human. A hundred years after the beginning of the transformation of the medical profession toward this vision of what healthcare professionals should be, that transformation is still flawed and incomplete. We arguably haven’t even begun the transformation of the teaching profession, particularly in higher education. If we’re really going to do this, then we need to all do it together.

With a little more work, Pearson’s learning design framework could be very useful to organizations like Quality Matters (QM) and the Online Learning Consortium (OLC), which help a lot of faculty members and have their respect. But that’s not the end of it. QM and OLC have the most influence on faculty who teach online and on the design of online courses. One of the intriguing aspects of this framework coming from Pearson is the message that learning design principles are relevant to all of the contexts in which their products are used, whether online or face-to-face. There’s a lot of good pedagogy that doesn’t make the jump from online to the traditional classroom because educators get stuck on what to do with their in-class time (which is often unhelpfully labeled “lecture periods”). There’s also a lot of good pedagogy that doesn’t make the jump from one discipline to another. For example, I know a lot of good faculty members who care deeply about helping students develop their argumentation skills, are good at it, and would be interested to know that there is a relevant research base about facilitation techniques that work. If those educators happen to teach English composition, then they probably have run across this research. But if they teach history or sociology or biology or mechanical engineering, then there’s a good chance they are still in the dark. Pearson could help change that.

This learning design release could be one nice little thing among many things—good, bad, and indifferent—that Pearson has done in 2016. Or it could be the first step down the long road of a critical company-wide course correction toward real collaboration with educational researchers and practitioners. One that helps bring about a world in which we all learn about learning together and teachers are valued as skilled professionals in the same way that doctors are today. One that is good for Pearson and good for the world.

I hope it is the latter. Time will tell.

If you are going to use my work in your analysis, you should cite me. My tweets are public and so there is no excuse for erasing me from the conversation. For others who agree, they can see the exchange here: https://twitter.com/lisalibrarian/status/808766334175678468

By the way, I’m a professor and a librarian. “Library science person” is a very odd way to characterize someone.

Fixed, Lisa. Thanks for calling that out. My bad.

Thank you for editing to make the fixes. Best, Lisa