Ten days ago, Arizona State University (ASU) and the Boston Consulting Group (BCG) released a report, supported by the Bill & Melinda Gates Foundation (BMGF)[1], titled “Making Digital Learning Work: Success Strategies From Six Leading Universities and Community Colleges”. The basic idea [emphasis added]:

> How can the use of digital technologies in postsecondary education impact students’ access to education, student outcomes, and the return on investment for students and institutions? What are the biggest challenges for an institution seeking to implement high-quality digital learning opportunities? What promising practices enable an institution to achieve impact at a larger scale? [snip]
>
> The answers, at least in part, lie in case studies of six colleges and universities: Arizona State University, the University of Central Florida, Georgia State University, Houston Community College, Kentucky Community and Technical College System, and Rio Salado Community College. The first three institutions in this list are public research universities, representing different geographic populations and access missions. The other three institutions include two community colleges and a state-wide community college system.
>
> These six institutions have a strong track record of using digital learning to serve large, socioeconomically diverse student populations, and each has been a pioneer in innovating to expand access to postsecondary education, improve student outcomes, and provide higher education at an affordable cost.

The methodology is a case study of each school, and the report then takes a stance on what other institutions should do.

> Now is the time for leaders to champion the potential of digital learning to open the doors of higher education wider and to improve student outcomes, while operating more efficiently and at lower cost. The journey of each college or university will be unique, but the set of promising practices described in this report may serve as a useful guide for all institutions.

There are multiple methods for evaluating a school; it turns out that some are more meaningful than others, and it is always helpful to start with what students experience in actual courses. The ASU / BCG report provides useful context on course design.

> At Rio Salado, 22 full-time faculty chairs develop courses with the support of a central team that includes subject-matter experts, instructional designers, media support staff, and production staff. About 1,500 adjunct faculty members teach the courses, which they can personalize by adding an introductory message or video for each module.

It turns out that I have access to two courses, ENG102 (First Year Composition) and EED200 (Foundations of Early Childhood Education), which gives me an opportunity to better understand the student experience. What these courses indicate, however, is a troubling lack of meaningful interaction between faculty and students.

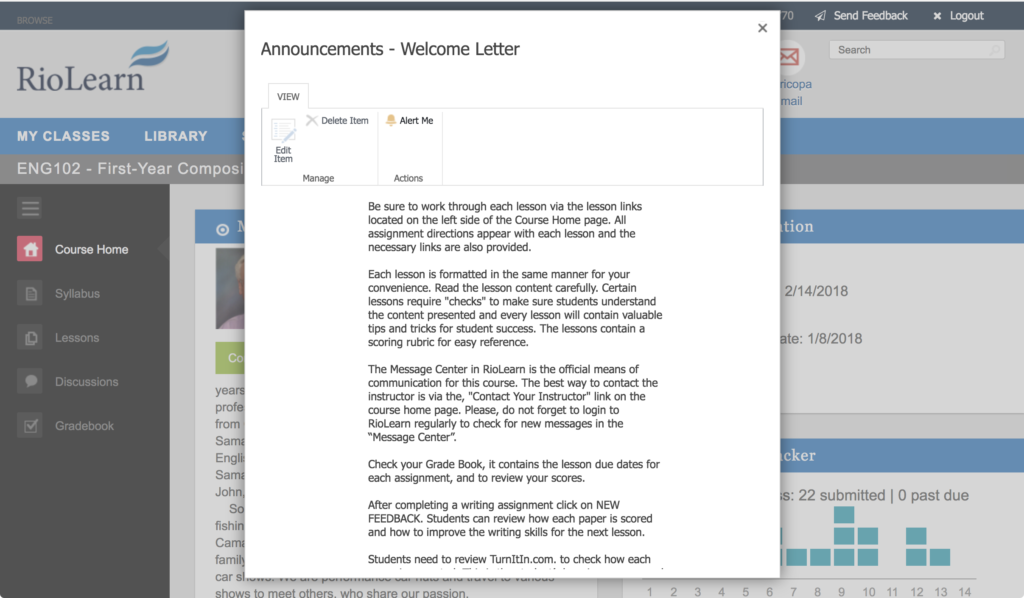

In ENG102, the course materials consist of Rio Salado-developed courseware embedded in the college’s custom course management system, RioLearn. The materials appear to be quite extensive, and all of them, organized into 14 lessons, are available at the start of the term. To find actual due dates, students have to go to the gradebook, as no specific dates are included in the courseware.

The instructor sent out several announcements and a welcome letter at the beginning of the course, and then one reminder or update message at the beginning of each month. That’s it for instructor-initiated interactions.

There were no student-to-student discussions, as the discussion board went unused in the course. There was one peer-review activity, but otherwise no interaction between students. I have been a frequent critic of threaded discussion boards, but it is certainly better to have something rather than nothing.

The rest of the interactions fell into two categories: grading of assignments and responses to student-initiated messages. The primary feedback method in these two courses was faculty use of the custom-developed Feedback Tool, which uses rubrics to grade assignments.

Several assignments included annotated markups of the submitted papers, which appear to be the most useful feedback from instructors.

A review of EED200 shows the same course structure: few instructor-initiated interactions, rubric grading as the feedback on assignments, and specific responses when students send in questions through the Message Center.

For both courses, the instructors typically responded to student messages within a day or two.

This approach is troubling, as neither course appears to meet the “regular and substantive interaction” regulation for credit-bearing online courses. I have been critical of the vague standards and of the egregious way the Department of Education’s Office of Inspector General applied them in its audit of Western Governors University, but these complaints do not mean that the regulation has no point. The idea is that course design and facilitation should ensure that students are not left to work through static course materials on their own or made responsible for initiating most forms of interaction. As stated in a 2014 Dear Colleague Letter addressing regular and substantive interaction in competency-based education (CBE) approaches [emphasis added]:

> We do not consider interaction that is wholly optional or initiated primarily by the student to be regular and substantive interaction between students and instructors. Interaction that occurs only upon the request of the student (either electronically or otherwise) would not be considered regular and substantive interaction.
>
> Some institutions design their CBE programs using a faculty model where no single faculty member is responsible for all aspects of a given course or competency. In these models, different instructors might perform different roles: for example, some working with students to develop and implement an academic action plan, others evaluating assessments and providing substantive feedback (merely grading a test or paper would not be substantive interaction), and still others responding to content questions.

The problem with case studies is that the cases selected may not be representative or appropriate for proving one’s thesis. It is possible that I happened to examine two courses that are aberrations. Even so, this view is troubling: these are centrally developed courses (not subject to the whim of individual instructors), and both make few meaningful provisions for faculty-student or student-student interaction. The instructors were responsive to messages, but that is not enough to back up claims of high-quality courses. The dissonance between these two courses and what the ASU / BCG report describes led me to take a critical look at the data.

In the next post, I’ll take the external view and look at aggregate public data on Rio Salado College’s academic outcomes.

[1] Disclosure: Our e-Literate TV series was funded in part by the Bill & Melinda Gates Foundation.

I think it is worth distinguishing between academic outcomes (such as graduation and retention) and learning outcomes, which are measures of knowledge, skills, and dispositions. We can improve graduation and retention by raising our standards for teaching and learning so that everyone learns more and passes more classes, or we can achieve the same effect by lowering those standards so that more students pass their classes whether or not they learn. We need to measure learning, not just passing, and the case studies in the report do not seem to do so.